AI is often discussed through the lens of algorithms, neural networks, or foundation models. However, as the scale and economic importance of AI systems continue to grow, it has become increasingly clear that the technology cannot be understood purely as software. Describing how the industry is evolving, NVIDIA proposed a conceptual framework in a blog post titled “AI is a Five Layer Cake,” which frames AI as a vertically integrated industrial system rather than a standalone technological capability.

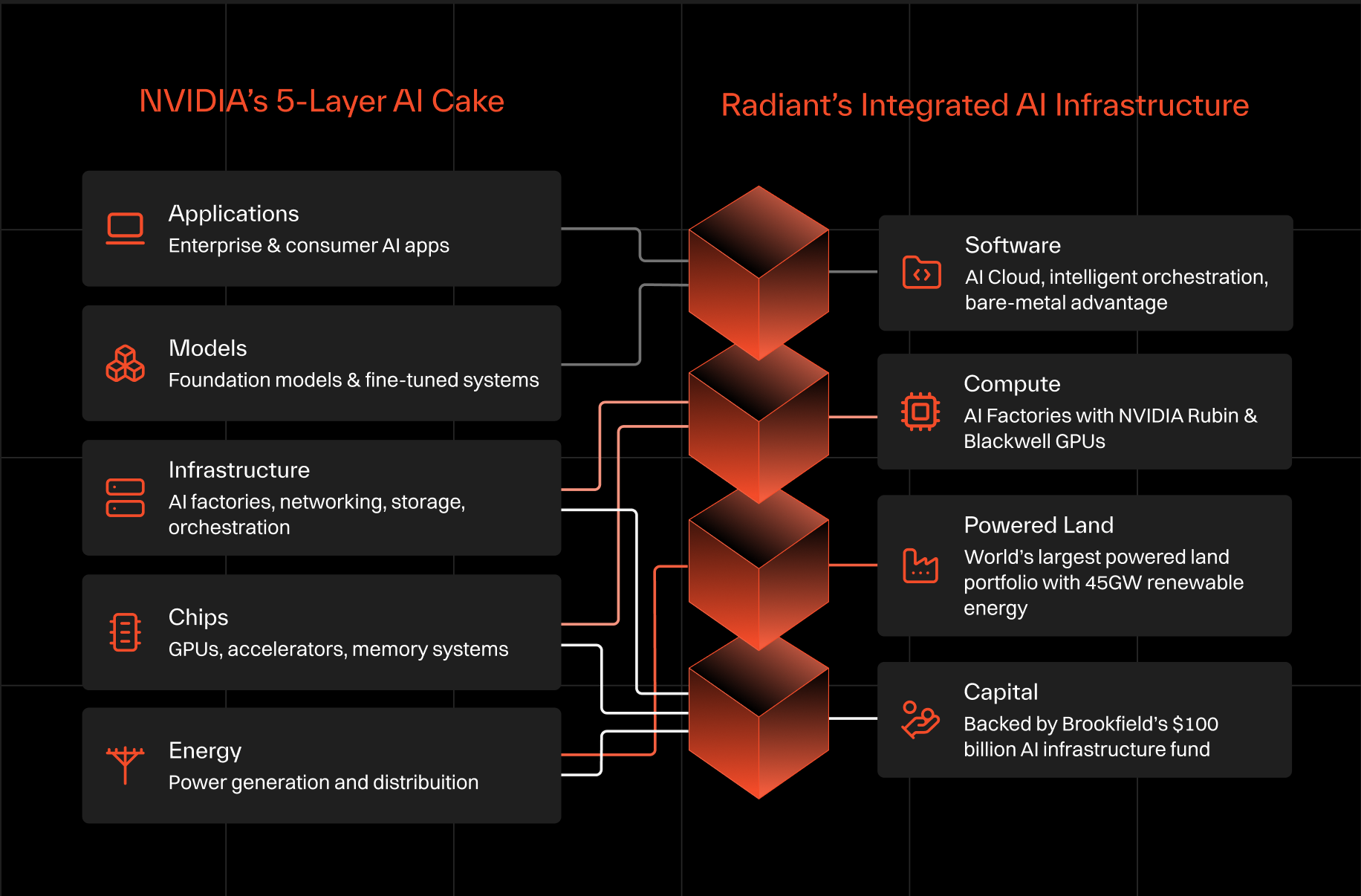

At its core, the model argues that AI is the result of a layered production pipeline in which electricity is transformed into intelligence through successive stages of hardware, infrastructure, and software. The five layers identified by NVIDIA (energy, chips, infrastructure, models, and applications) together represent the full lifecycle of how intelligence is created, deployed, and ultimately consumed.

When viewed through this lens, AI begins to resemble earlier industrial transformations such as electricity generation, telecommunications networks, and cloud computing. In each case, progress depended not only on technological breakthroughs but also on the coordinated development of physical infrastructure, supply chains, and capital investment. AI is now entering a similar phase.

Radiant’s infrastructure architecture, built around the four foundational pillars of Software, Compute, Powered Land, and Capital, provides an operational framework that aligns closely with NVIDIA’s conceptual model. While NVIDIA’s stack describes how AI is technologically structured, Radiant’s model explains how that stack can actually be deployed, scaled, and governed in the real world.

Understanding the 5 Layer AI Cake

NVIDIA’s model begins with a deceptively simple but powerful observation: every interaction with an AI system ultimately originates from electrons.

When a user sends a prompt to a large language model, that request triggers millions or billions of mathematical operations inside GPUs located within data centers. These GPUs consume electrical power, convert it into computational work, and process neural network layers that generate the response. From a systems perspective, the entire process can be understood as the conversion of electrical energy into structured intelligence.

The five layers of NVIDIA’s AI cake (energy, chips, infrastructure, models, and applications) represent the stages through which that transformation occurs.

All of these layers are interdependent. Models cannot function without infrastructure, infrastructure cannot operate without chips, and chips cannot run without electricity. All of it requires capital, and lots of it. The stack therefore behaves less like a software ecosystem and more like a production chain. This shift in perspective helps explain why the AI industry is increasingly focused on topics that previously belonged to energy markets, semiconductor manufacturing, and large-scale infrastructure finance.
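The dependency chain described above can be illustrated with a minimal sketch. It assumes a strictly linear ordering of the five layers, which is a simplification made for illustration; the function name `usable_layers` is hypothetical and not part of any NVIDIA or Radiant tooling.

```python
# Layer names follow the five layers listed in the text, ordered bottom to top.
LAYERS = ["energy", "chips", "infrastructure", "models", "applications"]


def usable_layers(available: set[str]) -> list[str]:
    """Return the layers that can actually operate, walking up from the bottom.

    A layer is usable only if it and every layer beneath it are available,
    so the walk stops at the first gap.
    """
    usable = []
    for layer in LAYERS:
        if layer not in available:
            break
        usable.append(layer)
    return usable


# Chips are missing here, so nothing above energy can run even though
# infrastructure, models, and applications are nominally present.
print(usable_layers({"energy", "infrastructure", "models", "applications"}))
```

The point of the sketch is that a gap low in the stack disables everything above it, which is why the stack behaves like a production chain rather than a set of independent software components.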

AI is an Industrial System

The rapid expansion of AI workloads is forcing the industry to confront physical constraints that were largely invisible during earlier waves of cloud computing. Training frontier models requires tens of thousands of GPUs operating simultaneously, and inference workloads serving global applications may involve hundreds of thousands of accelerators distributed across multiple regions.

These deployments require enormous amounts of electrical power, sophisticated cooling systems, high-speed networking fabrics, and long-term capital investment. In other words, AI systems are beginning to resemble industrial production facilities, often referred to as AI factories.

The implications of this shift are profound. In the past, technological advantage in AI might have been determined primarily by algorithmic innovation or access to proprietary data. Today, it increasingly depends on who can design, finance, and operate the infrastructure required to sustain massive computational workloads.

This is where Radiant’s infrastructure model becomes relevant.

Radiant’s Four Pillars of AI Infrastructure

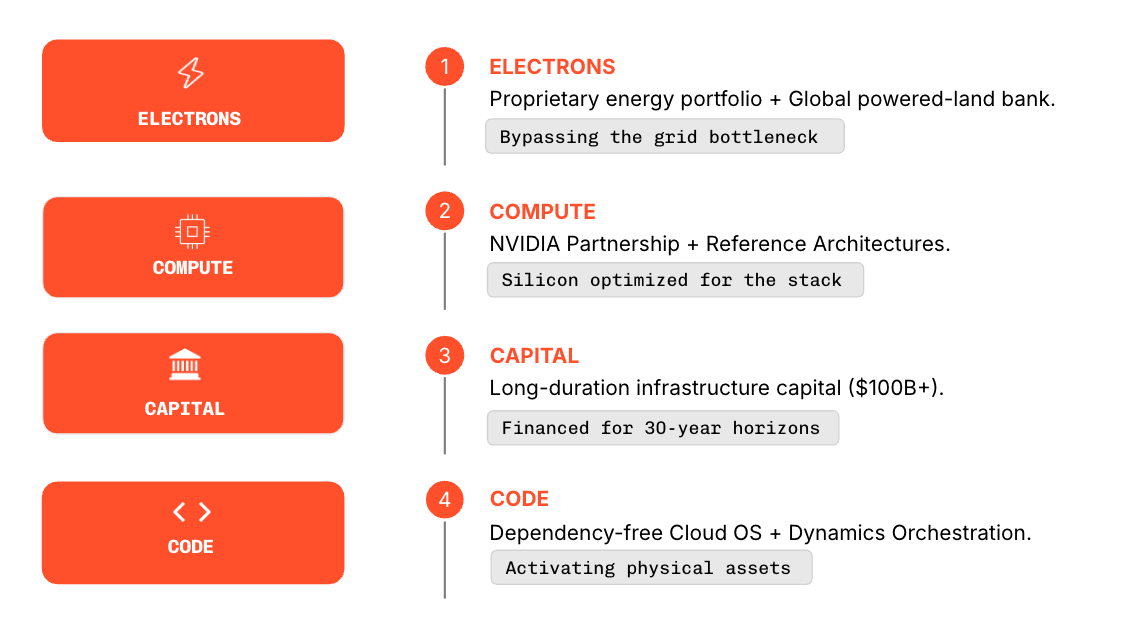

Radiant approaches the challenge of building AI infrastructure through a framework that focuses on the essential capabilities required to deploy intelligence at scale. Rather than describing AI purely in technological layers, we organize the system around four foundational pillars. Together, these pillars form an integrated architecture that enables the construction and operation of large-scale AI factories capable of supporting national and enterprise workloads.

AI factories need more than GPUs. They require power, land, capital discipline, and software control, all designed together and delivered together.

Software: The software layer provides the operational foundation that allows AI workloads to run efficiently across large clusters of compute infrastructure. Radiant’s AI platform functions as a sovereign control plane that orchestrates workloads, manages infrastructure resources, and enables organizations to train, fine-tune, and deploy models in secure environments.

The platform includes capabilities such as intelligent scheduling, model registry, fine-tuning pipelines, and secure multi-tenancy that allow multiple teams or departments to operate on the same infrastructure while maintaining strict isolation and governance controls.
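To make the idea of shared infrastructure with strict isolation more concrete, here is a toy sketch of quota-based multi-tenancy. It is not Radiant's platform or API: the class `ToyScheduler`, its fields, and the tenant names are all hypothetical, and a real control plane would also handle topology, priorities, preemption, and security boundaries.

```python
from dataclasses import dataclass, field


@dataclass
class ToyScheduler:
    """Hypothetical scheduler: one shared GPU pool, a hard quota per tenant."""
    total_gpus: int
    quotas: dict[str, int]  # tenant -> maximum GPUs it may hold at once
    in_use: dict[str, int] = field(default_factory=dict)

    def free_gpus(self) -> int:
        """GPUs in the shared pool that no tenant currently holds."""
        return self.total_gpus - sum(self.in_use.values())

    def request(self, tenant: str, gpus: int) -> bool:
        """Grant the request only if it fits the tenant's quota and the pool."""
        if tenant not in self.quotas or gpus <= 0:
            return False
        held = self.in_use.get(tenant, 0)
        if held + gpus > self.quotas[tenant]:
            return False  # would exceed this tenant's own quota
        if gpus > self.free_gpus():
            return False  # shared pool is exhausted
        self.in_use[tenant] = held + gpus
        return True

    def release(self, tenant: str, gpus: int) -> None:
        """Return GPUs to the pool, never dropping a tenant below zero."""
        held = self.in_use.get(tenant, 0)
        self.in_use[tenant] = max(0, held - gpus)


sched = ToyScheduler(total_gpus=16, quotas={"research": 8, "finance": 8})
print(sched.request("research", 8))  # fits both the quota and the pool
print(sched.request("research", 1))  # rejected: research is at its quota
print(sched.request("finance", 8))   # granted: finance's share is untouched
```

Because the quotas in this example sum to the pool size, one team filling its allocation cannot starve the other, which is the isolation property the paragraph describes in much simplified form.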

Compute: The compute pillar represents the computational engines that perform AI workloads. Radiant supports a truly multi-vendor ecosystem of GPUs and accelerators, high-performance networking, and large-scale storage systems that are designed to operate at hyperscale.

These clusters form the computational core of AI factories, enabling organizations to train foundation models, run large inference workloads, and support advanced AI applications across multiple sectors.

Powered Land: While compute clusters often receive the most attention in discussions about AI infrastructure, they cannot exist without the physical facilities that house them. Radiant addresses this requirement through its powered land strategy, which focuses on securing sites with reliable grid connectivity, engineered cooling capacity, and infrastructure optimized for high-density GPU deployments.

By securing power access and designing facilities specifically for AI workloads, Radiant ensures that compute infrastructure can be deployed quickly without encountering the grid bottlenecks and permitting delays that increasingly constrain data-center development.

Capital: A buildout spanning decades requires patient capital, not debt financing at unsustainable interest rates. Large-scale AI infrastructure benefits from substantial long-term financing aligned with the lifecycle of physical assets such as data centers, networking equipment, and compute clusters. Radiant integrates infrastructure financing directly into its deployment model, enabling customers to access hyperscale AI capacity without assuming the capital burden of building facilities themselves.

Translating the Five Layers into Tangible Infrastructure

When NVIDIA’s conceptual stack is placed alongside Radiant’s infrastructure model, a clear correspondence emerges between the layers of AI production and the pillars required to deploy them.
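One plausible reading of that correspondence is sketched below. The mapping is an assumption inferred from the pillar descriptions in this article (for example, powered land covering grid connectivity and facilities, compute covering GPUs and networking); it is not an official mapping published by NVIDIA or Radiant, and the names `LAYER_TO_PILLARS` and `pillars_for` are hypothetical.

```python
# Illustrative mapping only: inferred from the pillar descriptions in the
# article, not taken from NVIDIA or Radiant material.
LAYER_TO_PILLARS = {
    "energy": ["Powered Land"],
    "chips": ["Compute"],
    "infrastructure": ["Powered Land", "Compute"],
    "models": ["Software"],
    "applications": ["Software"],
}

# The article states that every layer requires capital, so Capital is
# treated as cross-cutting and attached to all of them.
CROSS_CUTTING_PILLAR = "Capital"


def pillars_for(layer: str) -> list[str]:
    """Return the pillars assumed to support a layer, Capital included."""
    return LAYER_TO_PILLARS[layer] + [CROSS_CUTTING_PILLAR]


for layer in LAYER_TO_PILLARS:
    print(f"{layer:>14} -> {', '.join(pillars_for(layer))}")
```

Treating Capital as cross-cutting rather than tying it to a single layer reflects the earlier observation that the whole stack depends on financing.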

Hyperscale, Engineered as a System

Hyperscale is defined not only by the number of GPUs deployed, but also by the ability to deliver and operate large-scale infrastructure without being held back by power, site, or financing constraints. Radiant approaches hyperscale as a system, integrating powered land, compute, capital, and software into a single delivery model that removes the fragmentation inherent in traditional deployments.

Rather than assembling infrastructure across multiple vendors and timelines, Radiant provides the shortest path from site to live infrastructure. Powered land is secured with grid interconnection, substations, and energization schedules aligned upfront. GPU systems are deployed on standardized, NVIDIA-aligned architectures, while high-density cluster designs and liquid-cooling envelopes ensure sustained performance at scale. Each deployment is commissioned end-to-end, with capacity validated under load before handover, ensuring systems are production-ready from day one.

Our data centers across the globe are equipped for hyperscale deployment. With power and site readiness established in advance and infrastructure components aligned to a single execution plan, AI factories can be brought online in quarters rather than years. Radiant’s architecture is built for continuous scale. Clusters are designed to operate as cohesive systems across tens of thousands of GPUs, with consistent performance maintained through tightly integrated networking, cooling, and control layers. Modular expansion allows capacity to grow in phases without re-architecting the underlying system, preserving efficiency as deployments scale.

Operationally, the model extends beyond deployment into sustained reliability. From factory burn-in and synthetic workload validation to SLA-driven operations and telemetry-driven management, infrastructure is continuously monitored and optimized. This ensures that hyperscale is not only achieved, but maintained with predictable performance over time.

Conclusion

NVIDIA’s five-layer model explains how AI is structured. The next step is making that structure real. Radiant does this by bringing power, compute, software, and capital into one coordinated system from the start. Instead of stitching together multiple vendors and timelines, the entire stack is aligned to deliver capacity that is ready to use, when it is needed.

This changes how AI is deployed. Build cycles become predictable. Capacity comes online in step with demand. Infrastructure behaves less like a project and more like a system that can be relied on.

The shift underway is simple: AI infrastructure is moving from cluster-scale deployments to vertically integrated AI factories, and Radiant is designed for that shift.