AI infrastructure has outgrown the grid.

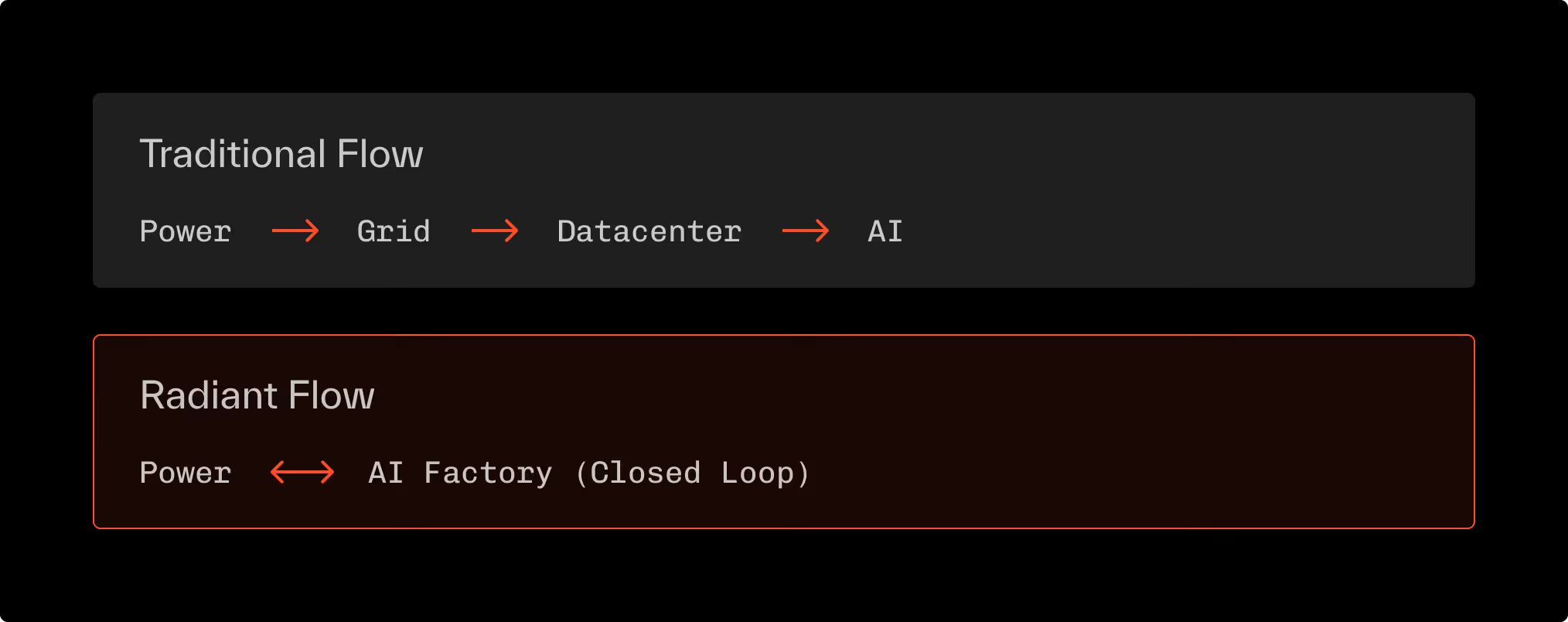

For more than a decade, datacenters have been built downstream from power—chasing electrons through congested public transmission networks never designed for 100-megawatt clusters.

This approach is now the primary constraint on the global AI economy.

Every bottleneck (power price volatility, interconnection delays, stranded capacity) traces back to one architectural error: we move power to compute instead of compute to power.

Radiant’s thesis: Abundance begins when compute meets generation.

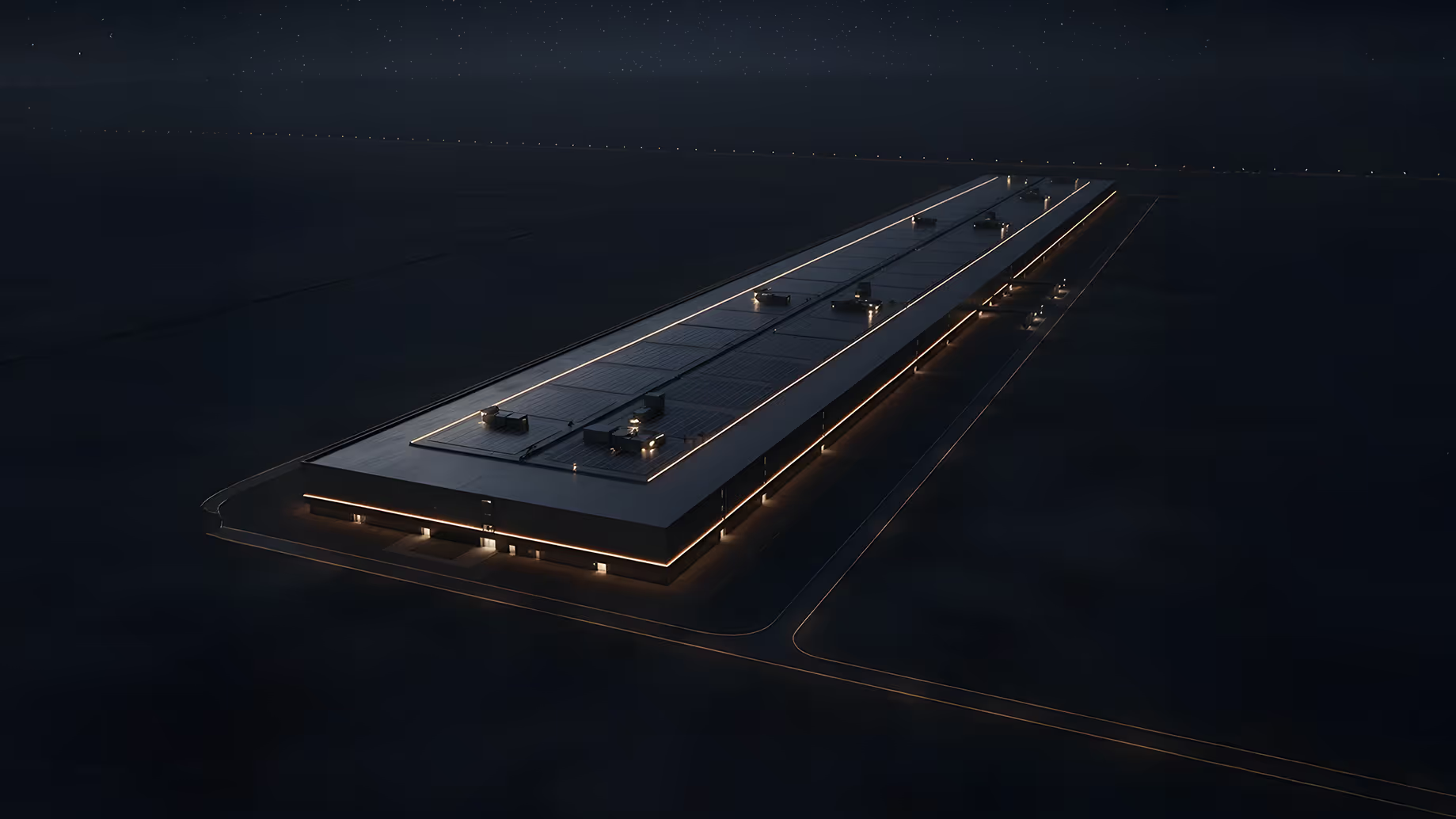

Our behind-the-meter model co-locates AI Factories directly with massive-scale hydro, wind, solar, or nuclear generation.

This is not an optimization of the datacenter; it is a re-architecture of the entire supply chain.

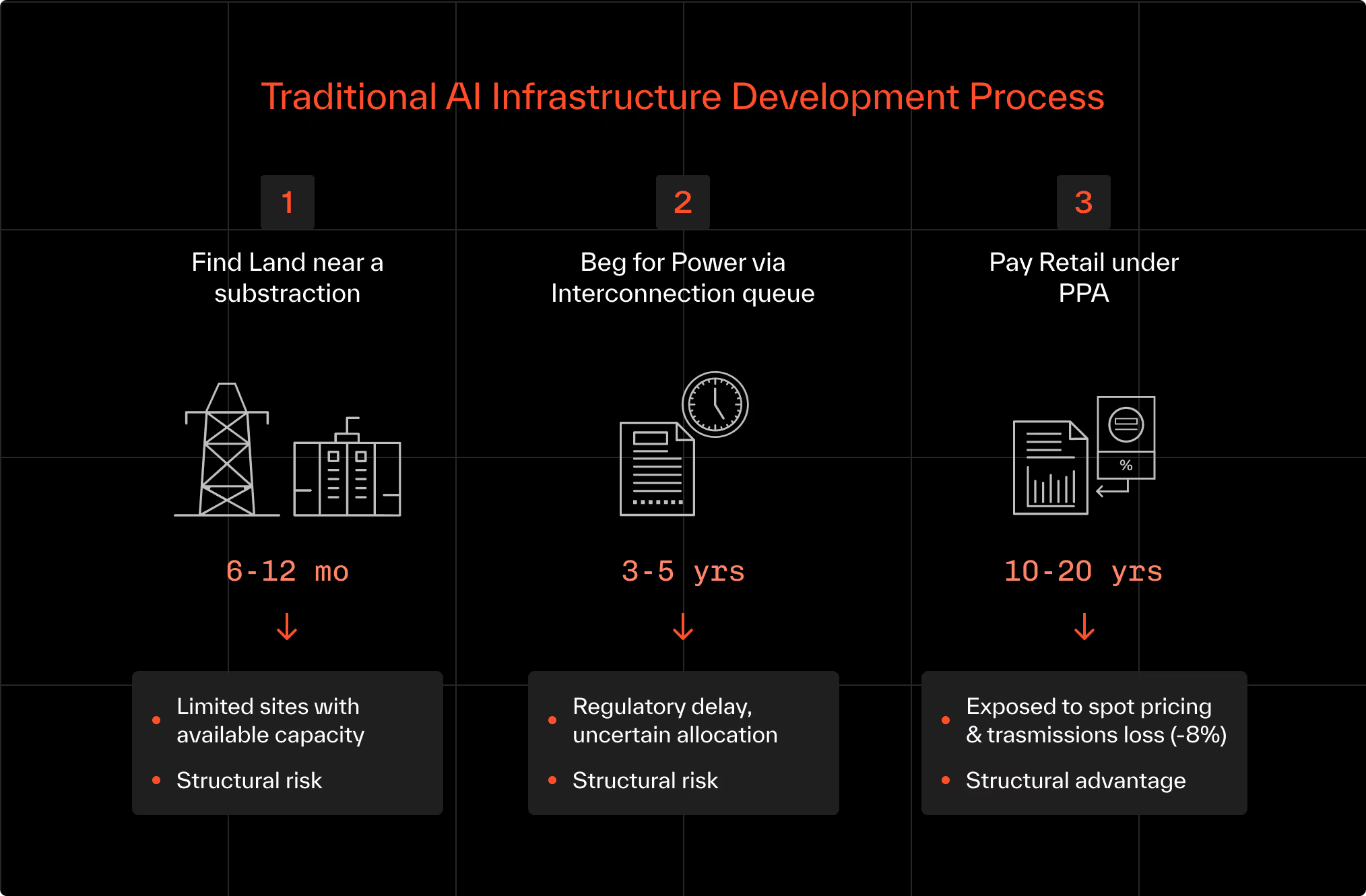

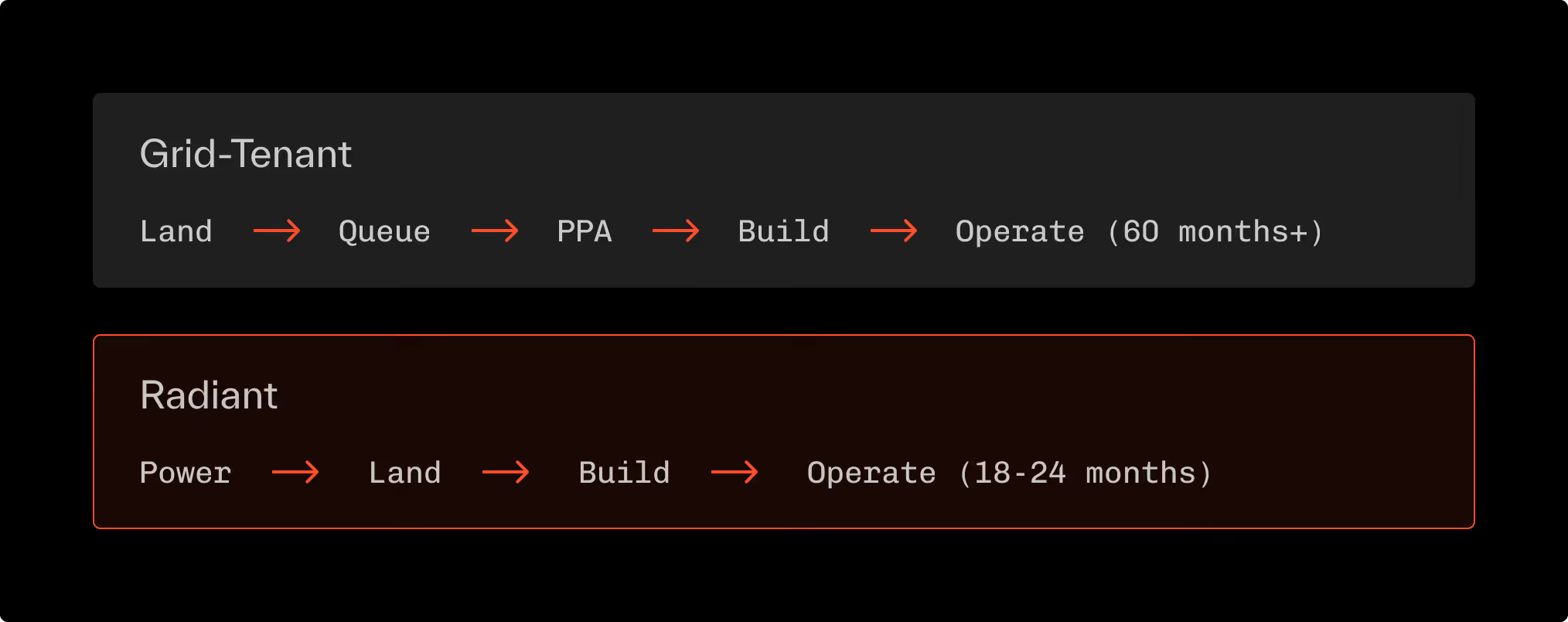

The Grid-Tenant Model (The Old Way)

The existing “neo-cloud” paradigm treats the grid as the delivery system for power.

Datacenters are, with a handful of exceptions, tenants of a public utility, not participants in energy production.

Process Flow:

Financial Anatomy of the Grid-Tenant Model

A tenant cloud therefore pays 60–70% more than the raw generation cost of power.

When power is 30–40% of AI operating expense, that markup is terminally inefficient.

More critically, the timeline is incompatible with AI growth curves.

Compute demand doubles roughly every 5–6 months; grid capacity expansion moves in 10-year planning cycles.

The end result is simple: a widening structural gap between power availability and model ambition.
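To put the mismatch in numbers, the sketch below compounds the quoted doubling time over one 10-year planning cycle. It assumes the 5–6 month doubling rate holds for the full decade, which is an extrapolation rather than a forecast; the function name is illustrative.

```python
# Sketch: how far compute demand grows within one grid planning cycle.
# Inputs are the figures quoted in the text; the constant-rate assumption is ours.

def demand_multiple(doubling_months: float, horizon_years: float) -> float:
    """Return the growth multiple after `horizon_years` of steady doubling."""
    doublings = horizon_years * 12 / doubling_months
    return 2 ** doublings

for months in (5, 6):
    multiple = demand_multiple(months, horizon_years=10)
    print(f"doubling every {months} months -> {multiple:,.0f}x over 10 years")
```

Even at the slower 6-month rate, demand passes through 20 doublings in the time the grid completes a single planning cycle.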

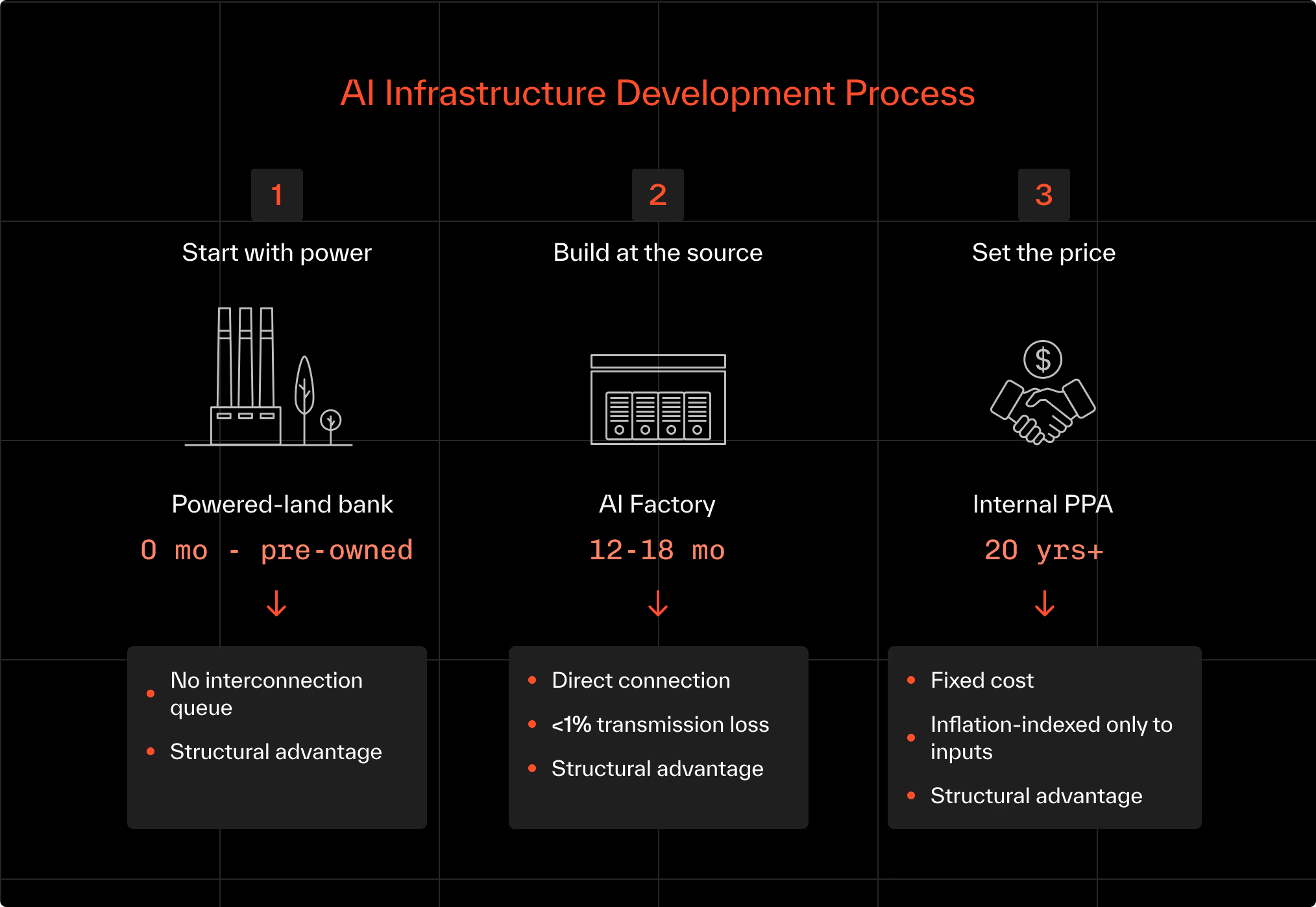

The Behind-the-Meter Model (The Radiant Way)

Radiant inverts the logic. We operate as an energy owner, not a tenant.

Through Brookfield, we build and own the generation first, then integrate compute directly at the source.

Process Flow:

The difference is not incremental efficiency; it is systemic leverage.

When power is internalized, compute economics compound.

The Three Structural Advantages

Velocity — Time to Intelligence

By starting with generation, we bypass the interconnection queue entirely.

The development clock runs in parallel instead of series.
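The series-versus-parallel point can be sketched as simple schedule arithmetic: in series, total time is the sum of the workstreams; in parallel, it is the longest one. The durations below are hypothetical placeholders chosen only to show the mechanics, not figures from this document.

```python
# Sketch: series vs. parallel scheduling of two workstreams.
# Durations are hypothetical placeholders, not figures from the text.

def series_months(*workstreams: float) -> float:
    """Total duration when each workstream waits for the previous one."""
    return sum(workstreams)

def parallel_months(*workstreams: float) -> float:
    """Total duration when all workstreams run at the same time."""
    return max(workstreams)

power_months = 36.0    # hypothetical: time to secure power
compute_months = 18.0  # hypothetical: time to build and fit out compute

print(series_months(power_months, compute_months))    # workstreams back to back
print(parallel_months(power_months, compute_months))  # workstreams overlapped
```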

Deployment Timeline Comparison

Cost — The Structural Advantage

Owning power removes the grid’s 60–70% markup and hedging costs.

It converts variable Opex into predictable Capex.

Delivered Power Cost (illustrative)

Result: ≈ 50% reduction in delivered power cost, which directly lowers cost-per-training-run and enables fixed-price compute contracts that competitors cannot match.
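As a rough cross-check, the sketch below works through the arithmetic implied by the figures quoted in this document: a 60–70% markup, power at 30–40% of operating expense, and a ≈ 50% saving. Removing the markup alone saves roughly 37–41% of the delivered price, so the rest of the ≈ 50% would have to come from avoided hedging costs or other line items; that attribution is an assumption. Function names are illustrative.

```python
# Sketch: relate the quoted markup, power share of Opex, and ~50% saving.
# All inputs are ranges quoted in the text; names are illustrative.

def saving_from_markup(markup: float) -> float:
    """Fraction of the delivered price saved if the markup alone disappears."""
    return 1 - 1 / (1 + markup)

def opex_reduction(power_share: float, power_saving: float) -> float:
    """Fraction of total Opex saved when power cost falls by `power_saving`."""
    return power_share * power_saving

for markup in (0.60, 0.70):
    saving = saving_from_markup(markup)
    print(f"markup {markup:.0%}: saving from markup alone = {saving:.1%}")

for share in (0.30, 0.40):
    reduction = opex_reduction(share, power_saving=0.50)
    print(f"power share {share:.0%}: total Opex reduction = {reduction:.0%}")
```

On these inputs, a 50% cut in delivered power cost corresponds to a 15–20% cut in total operating expense.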

Reliability — Utility-Grade Availability

Public grids are exposed to congestion, weather, and geopolitical risk.

By owning generation and micro-grid infrastructure, Radiant achieves Tier IV-equivalent availability (four nines, or 99.99% uptime) at the facility level.
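For a sense of scale, an availability percentage converts directly into allowable downtime per year. The sketch below does that conversion for four nines; the 99.995% figure often quoted for Tier IV facilities is included only for comparison and is an assumption, not a number from this document.

```python
# Sketch: convert an availability fraction into allowable downtime per year.

MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600; ignores leap years

def annual_downtime_minutes(availability: float) -> float:
    """Minutes per year a facility may be down at the given availability."""
    return (1 - availability) * MINUTES_PER_YEAR

for label, availability in (("four nines", 0.9999), ("commonly quoted Tier IV", 0.99995)):
    minutes = annual_downtime_minutes(availability)
    print(f"{label} ({availability:.3%}): {minutes:.1f} minutes per year")
```

Four nines leaves a budget of under an hour of downtime per year.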

Exhibit 5 | Sources of Failure Eliminated

An AI Factory that controls its own power behaves like a power plant: always on, always priced.

The Architectural Shift

AI Factories are no longer just datacenters with GPUs.

They are industrial consumers of energy that must be designed as extensions of generation.

Exhibit 6 | Energy-First Architecture

When compute meets generation, three things collapse: latency, cost, and carbon.

Every watt travels meters, not miles. Every dollar of Capex produces both electricity and intelligence.

Conclusion

The future of AI infrastructure belongs to those who treat power as the primary design variable—not an external dependency. By moving compute to power, we move AI from scarcity to abundance, from volatility to utility.