The appetite for large-scale GPU clusters is now outpacing the physical world’s ability to deliver them. While hardware procurement remains a challenge, a deeper constraint is emerging at the infrastructure layer. Building AI factories, ensuring regulatory compliance, securing reliable power connections, and expanding the energy capacity required to operate them are becoming increasingly complex and slow. Furthermore, the long lead times for mechanical and electrical equipment such as generators, transformers, switchgear, UPS, and chillers are adding to the complexity and delays.

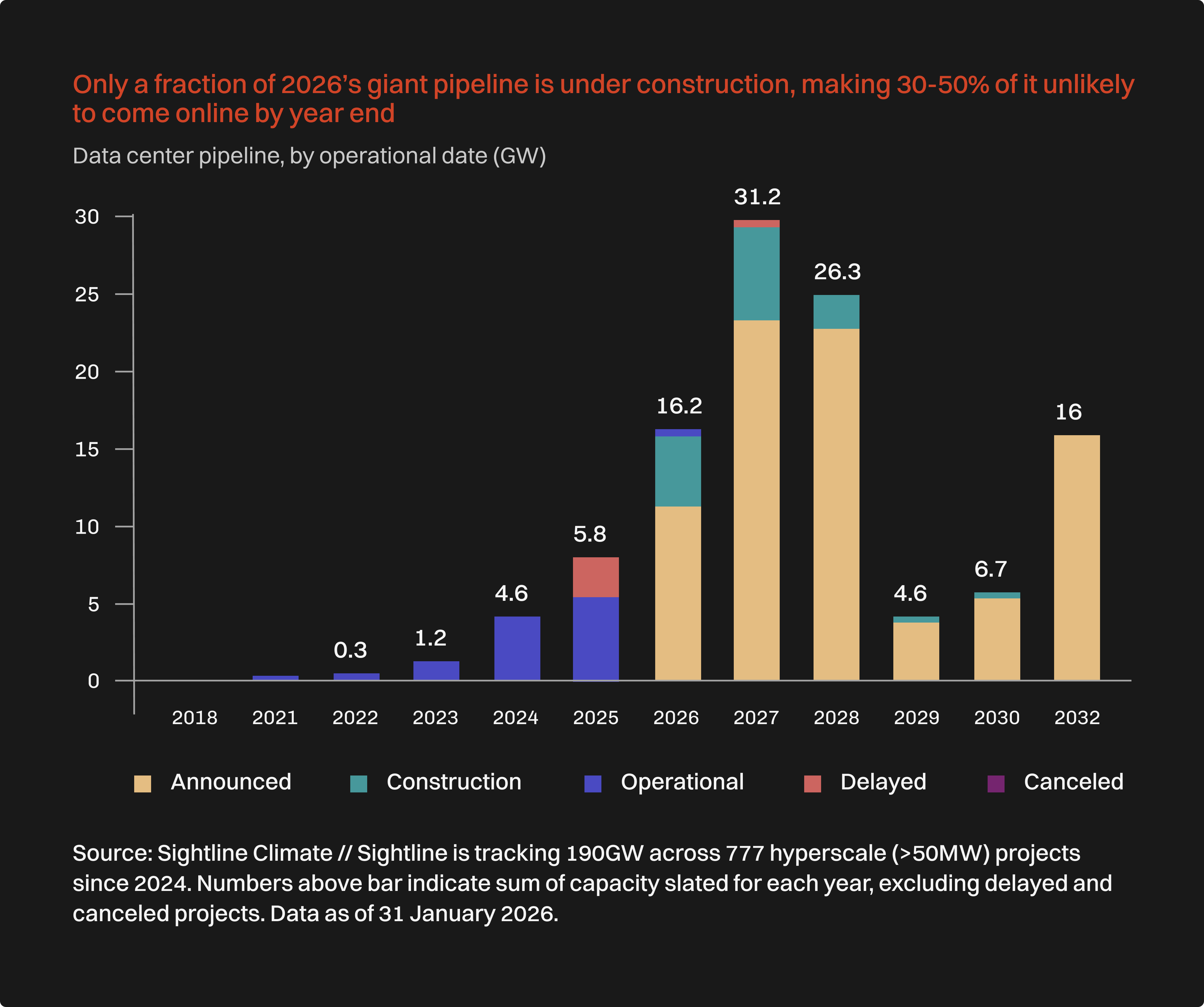

The data paints a stark picture. Morgan Stanley Research projects that U.S. data center capacity demand will hit 74 GW by 2028, with a projected shortfall of about 49 GW in available power access. As the image below shows, about 30-50% of large data centers and new AI factories scheduled to come online in 2026 are delayed, mainly due to power bottlenecks.

Even industry leaders are sounding the alarm. NVIDIA CEO Jensen Huang recently highlighted that building large AI data centers in the U.S. can take around three years from start to finish, largely due to long permitting processes, environmental reviews, and grid upgrades. He emphasized that infrastructure speed, how quickly countries can get AI factories up and running, is becoming the true competitive advantage in the global AI race.

Nations building sovereign AI, enterprises building AI agents, and labs training foundation models are all increasingly paralyzed by energy scarcity, grid connection backlogs, and protracted land permitting cycles, which extend deployment horizons to 48 months or longer. The electrical grid itself is the primary bottleneck. The prevailing sequential approach of leasing power, piecing together hardware, and licensing software cannot deliver the scale and velocity demanded by the largest high-performance computing infrastructure build-out in history. To condense years of infrastructure delays into mere months, the industry must pivot to a vertically integrated utility model.

The Economics of AI Factories

The other key driver of the AI infrastructure buildout is the huge CapEx investment needed to set up AI factories. Capital structure dictates outcomes. Radiant operates with infrastructure-grade capital, generally at a high-single-digit cost of capital, a stark contrast to the 20%+ average financing rates that data center developers typically face. This differential compounds significantly over decades. By facilitating fixed-price agreements, proprietary power generation, and long-term commitments, it enables Radiant to make AI infrastructure plentiful and inexpensive.
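To make the financing gap concrete, here is a minimal sketch using the standard level-payment (annuity) formula. The $1B build cost, the 20-year horizon, and the 9% rate standing in for "high single digit" are hypothetical placeholders chosen for illustration, not Radiant figures; only the 20% rate echoes the number cited above.

```python
def annual_capital_charge(capex: float, rate: float, years: int) -> float:
    """Level annual payment that repays `capex` over `years` at interest `rate`."""
    if rate == 0:
        return capex / years
    # Standard annuity formula: P * r / (1 - (1 + r)^-n)
    return capex * rate / (1 - (1 + rate) ** -years)


# Hypothetical inputs, for illustration only.
CAPEX = 1_000_000_000  # assumed $1B build cost
YEARS = 20             # assumed financing horizon
LOW_RATE = 0.09        # assumed stand-in for a high-single-digit cost of capital
HIGH_RATE = 0.20       # stand-in for the 20%+ rates cited in the text

low = annual_capital_charge(CAPEX, LOW_RATE, YEARS)
high = annual_capital_charge(CAPEX, HIGH_RATE, YEARS)

print(f"Annual charge at {LOW_RATE:.0%}: ${low / 1e6:,.1f}M")
print(f"Annual charge at {HIGH_RATE:.0%}: ${high / 1e6:,.1f}M")
print(f"Ratio: {high / low:.2f}x")
```

Under these assumed inputs, the higher rate roughly doubles the annual capital charge on the same asset, which is the kind of gap that makes long-duration, fixed-price commitments easier to offer at the lower rate.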

The lower cost of capital is critical for funding the massive, long-term investments required for modern AI infrastructure. By securing infrastructure-grade financing, Radiant is positioned as a utility provider rather than a technology reseller, which allows for stable, long-duration commitments with customers and suppliers. This stability translates into predictable operating expenses and eliminates the cyclical capital risk associated with conventional, higher-cost financing models. This structural advantage permits Radiant to absorb power and land acquisition costs upfront, delivering a fully operational solution with a single, predictable price point for the customer.

By commanding the entire stack, from Brookfield's $100 billion infrastructure fund and expansive powered land portfolio to NVIDIA-certified accelerated computing and bespoke software, we circumvent the grid bottleneck entirely. This vertical integration directly yields significantly lower power costs and the capability to deploy sovereign AI factories in a fraction of the conventional timeframe.

This vertical integration is the core mechanism for value creation. By controlling the site, power, and compute orchestration, Radiant eliminates the margin stack of disparate third-party vendors and the associated delays of coordinating multiple contracts. The reduction in power costs is a direct result of proprietary power generation and securing established interconnection rights, which avoids transmission fees and congestion charges typical of grid-dependent facilities. Furthermore, integrating capital, land, and compute deployment under one entity transforms a series of sequential, high-risk delays (permitting, interconnection, procurement) into a parallelized, low-risk process, accelerating time-to-revenue for customers by several years.

From Sequential Delays to Parallelized Delivery

The protracted timeline for AI infrastructure deployment is not a matter of a single failure point, but a result of a fundamentally flawed, sequential process. The conventional greenfield build is a chain of high-risk dependencies: land must be acquired, permitting must precede power, which must precede site construction, which must precede hardware procurement and commissioning. This prevailing method is inherently bottlenecked and is incapable of delivering the scale and velocity demanded today.

Radiant fundamentally rewrites this deployment schedule by transforming a series of sequential risks into a parallelized, low-risk process. By operating as a single counterparty that commands the entire stack, Radiant eliminates the delays and complexity of coordinating disparate third-party vendors. This structural integration is the core mechanism that compresses years of conventional infrastructure latency into mere months, delivering a production-ready AI factory in around 12 months. We do not wait for the grid; we incorporate power and land into our delivery, turning deployment velocity into a definitive strategic advantage.
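The difference between a sequential chain and a parallelized schedule can be sketched as a small critical-path calculation. The phase names follow the steps described above, but every duration and the parallel dependency graph are hypothetical placeholders, not Radiant's actual schedule; the point is only that the same work finishes sooner when fewer phases wait on each other.

```python
from functools import lru_cache

# Hypothetical phase durations in months (illustrative only).
DURATION = {
    "land": 6,
    "permitting": 12,
    "power": 18,
    "construction": 10,
    "hardware": 6,
    "commissioning": 2,
}

# Conventional build: every phase waits for the one before it.
SEQUENTIAL = {
    "land": [],
    "permitting": ["land"],
    "power": ["permitting"],
    "construction": ["power"],
    "hardware": ["construction"],
    "commissioning": ["hardware"],
}

# Assumed integrated build: independent phases start together.
PARALLEL = {
    "land": [],
    "permitting": [],
    "power": [],
    "hardware": [],
    "construction": ["land", "permitting"],
    "commissioning": ["construction", "power", "hardware"],
}


def total_months(durations: dict, deps: dict) -> int:
    """Length of the longest dependency chain (the critical path)."""

    @lru_cache(maxsize=None)
    def finish(phase: str) -> int:
        # A phase starts once all of its prerequisites have finished.
        start = max((finish(d) for d in deps[phase]), default=0)
        return start + durations[phase]

    return max(finish(p) for p in durations)


sequential_months = total_months(DURATION, SEQUENTIAL)
parallel_months = total_months(DURATION, PARALLEL)
print(f"Sequential: {sequential_months} months")
print(f"Parallel:   {parallel_months} months")
```

With these placeholder numbers, the total drops from the sum of all phases to the length of the longest chain. Shortening or pre-securing phases on that chain, such as land and power, would compress it further.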

Deployment Velocity as a Strategic Advantage

When you control both the power and the land, the resulting deployment velocity transforms into a strategic advantage. Radiant's behind-the-meter architecture bypasses interconnection queues and shields against price volatility. By securing powered land with established interconnects rather than mere zoning approvals, and by commissioning the hall, power, and cooling systems before the hardware even arrives, we ensure that AI workloads are ready the moment the GPUs are on-site. Our power strategy ensures that grid, water, and cooling risks are absorbed by Radiant, rather than being offloaded onto the customer.

Here’s a snapshot of Radiant’s deployment strategy when compared to traditional data center builds:

The Power Problem (And How Radiant Solves It)

Power is the most underestimated constraint in AI infrastructure. A single large AI training cluster can require 50–100 MW of power, equivalent to the consumption of a small city. Yet grid interconnection timelines in many markets now exceed three years. Radiant addresses this challenge by starting infrastructure design with power rather than compute.
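For a sense of scale, the 50–100 MW range can be converted into annual energy with simple arithmetic. This is a rough sketch that assumes the cluster draws its full rated load around the clock; the `load_factor` parameter is a hypothetical knob for relaxing that assumption.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours


def annual_energy_gwh(load_mw: float, load_factor: float = 1.0) -> float:
    """Annual energy in GWh for a given average load in MW."""
    return load_mw * HOURS_PER_YEAR * load_factor / 1000


# The 50 MW and 100 MW endpoints come from the text above.
for mw in (50, 100):
    print(f"{mw} MW continuous load: {annual_energy_gwh(mw):,.0f} GWh per year")
```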

A quick overview of the Radiant power deployment playbook:

Structurally Superior: Purpose-Built Facilities for High-Density Compute

Speed to market is only one part of the equation; the underlying infrastructure must also be equipped to handle the next generation of AI compute. Conventional data centers are often retrofitted for AI, struggling to dissipate the thermal density generated by modern GPUs. Radiant facilities, however, are purpose-built for AI from inception.

Radiant’s AI data centers are engineered specifically for NVIDIA's latest GPU generations, Blackwell and Rubin. By employing a liquid-first cooling strategy that captures 90% of the rack heat via direct-to-chip cooling, we help model-training clusters scale linearly across thousands of GPUs without encountering thermal throttling. This energy-efficient approach is not merely about temperature control; it is about sustaining deterministic computational performance at hyperscale. Having built several GPU clusters for inference that are already running in production, Radiant combines deep expertise across compute, storage, networking, and intelligent orchestration to deliver even the most demanding AI experiences with the highest performance.

Software Intelligence at Industrial Scale

Infrastructure devoid of intelligent orchestration is mostly static capacity. Developed entirely in-house with zero external dependencies, Radiant's software platform achieves 85% GPU utilization while upholding sovereign-grade security through advanced AI workload orchestration.
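One way to see why utilization matters is to divide the cost of a provisioned GPU-hour by the fraction of time the GPU does useful work. In this minimal sketch, the $2.00 hourly cost and the 50% comparison point are hypothetical placeholders; only the 85% figure comes from the text above.

```python
def cost_per_useful_gpu_hour(hourly_cost: float, utilization: float) -> float:
    """Effective cost of one GPU-hour of useful work at a given utilization."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_cost / utilization


HOURLY_COST = 2.00           # assumed cost per provisioned GPU-hour
BASELINE_UTILIZATION = 0.50  # assumed comparison point, not an industry figure
PLATFORM_UTILIZATION = 0.85  # utilization stated in the text

baseline = cost_per_useful_gpu_hour(HOURLY_COST, BASELINE_UTILIZATION)
platform = cost_per_useful_gpu_hour(HOURLY_COST, PLATFORM_UTILIZATION)
print(f"Baseline: ${baseline:.2f} per useful GPU-hour")
print(f"At 85%:   ${platform:.2f} per useful GPU-hour")
```

The same hardware budget buys more useful compute as utilization rises, which is why orchestration is treated here as part of the infrastructure rather than an add-on.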

Our streamlined architecture scales seamlessly from single racks to deployments exceeding 100,000 GPUs, maintaining operational autonomy throughout. This ensures that compute is not only deployed more rapidly but is also utilized with peak efficiency for both training and AI inference tasks. We merge the massive industrial infrastructure of gigawatt portfolios with a developer-centric software experience, featuring instant provisioning, transparent pricing, and bare-metal performance.

Unlock Speed and Scale for the Next Wave of AI Innovation

The decisive edge in the era of advanced AI rests not on simply having more compute, but on the swiftness and long-term reliability of infrastructure delivery. As conventional data center build-outs face debilitating, multi-year delays from power and regulatory challenges, a fundamental paradigm shift is required.

Radiant delivers this new infrastructure model by transforming a high-risk, sequential process into a streamlined, low-risk deployment achievable in months. Our integrated deployment bypasses critical bottlenecks, locks in stable, long-duration commitments, and establishes a true utility partner for global AI deployment. Ultimately, this unique framework removes the structural impediments of the past, ensuring that AI compute becomes a readily available resource, delivered with unmatched efficiency and sovereign-grade partnership.
