The research consensus on sovereign AI is forming fast. Five of the world's most credible institutions have now weighed in - McKinsey, the Tony Blair Institute, Oxford University, the World Economic Forum, and the Brookings Institution. They agree on more than they disagree. But they all stop at the same place: the edge of actual execution. This is where the conversation needs to go next.

The Research Consensus That Misses the Point

Sovereign AI is now a strategic priority across governments and industry. Across major research bodies, there is broad agreement on three points:

- AI infrastructure is economically and geopolitically consequential

- Most countries remain structurally dependent on external providers

- Current approaches leave meaningful gaps in autonomy

Where the literature is less developed is at the level of operational execution. It describes what sovereign AI should achieve, but not the conditions under which it functions independently.

These are the core texts, and each is worth having in your library if you are invested in this subject:

- McKinsey - sovereign AI is now treated as a strategic or existential priority among decision-makers

- Tony Blair Institute - AI as governing infrastructure shaping state capability

- Brookings Institution - sovereign AI as a dimension of geopolitical competition

- World Economic Forum - AI as a driver of national productivity and influence

- Oxford Internet Institute - structural concentration of compute and limited global distribution

These analyses converge on diagnosis. They are less explicit on operational design.

The Three-Level Framework and the Level It Missed

The Oxford paper's central contribution is a three-level framework for measuring compute sovereignty:

Level 1 - Territorial: Does AI compute physically exist within your borders?

Level 2 - Provider Nationality: Is the cloud provider operating that compute domestically owned?

Level 3 - Accelerator Vendor: Do you have access to chips from domestic suppliers?

Applied country by country, the framework reveals very different postures. Singapore hedges between US and Chinese providers with exact parity - three cloud regions from each - while Japan, Australia, and Israel are fully aligned with US providers. Conversely, Oxford’s census of nine leading public cloud providers found that Chile, Indonesia, and Thailand were aligned with China.

But the framework has a critical omission. It describes what you own. It says nothing about what you control.

A country can score well across all three levels and still be operationally dependent on a foreign entity the moment it tries to run a workload. Because sovereignty does not reside in the data center. It resides in the control plane.

This requires an additional level.

Level 4 - Operational Control: Can the AI infrastructure within your jurisdiction operate at full capacity with zero outbound connectivity to foreign infrastructure?

This is the dimension that determines whether the other layers translate into actual operational independence. It is also where many sovereign AI strategies remain under-specified.

Operational control includes scheduling, identity, policy enforcement, and system lifecycle management - the functions that determine what runs, where, and under whose authority.
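One way to make the four levels concrete is to record them as a checklist. The following is a minimal Python sketch, not part of the Oxford framework itself; the class name, field names, and example values are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SovereigntyAssessment:
    """Hypothetical checklist for one country's AI compute (names are illustrative)."""
    territorial: bool             # Level 1: compute physically inside the borders
    provider_domestic: bool       # Level 2: operating cloud provider is domestically owned
    accelerator_domestic: bool    # Level 3: chips available from domestic suppliers
    zero_egress_operation: bool   # Level 4: full capacity with no outbound connectivity

    def ownership_levels_met(self) -> int:
        """Count how many of the three ownership-oriented levels are satisfied."""
        return sum([self.territorial, self.provider_domestic, self.accelerator_domestic])

    def operationally_independent(self) -> bool:
        """Level 4 is assessed on its own; ownership does not substitute for it."""
        return self.zero_egress_operation


# A country that meets every ownership level but still relies on a foreign control plane.
example = SovereigntyAssessment(True, True, True, zero_egress_operation=False)
print(example.ownership_levels_met(), example.operationally_independent())
```

Keeping Level 4 as a separate question, rather than folding it into a single score, mirrors the argument above: a perfect result on the first three levels says nothing about who controls the system in operation.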

The Anatomy of the Sovereignty Gap

The Brookings Institution's February 2026 report notes that dependencies "compound across layers" and that "absolute control is structurally infeasible." This is correct - and it means that sovereign AI has always required a phased approach. The strategies most governments have pursued to date were rational starting points. The problem is not that they were wrong. The problem is that they were first steps being treated as complete solutions.

The Tony Blair Institute captures this precisely. It warns that technology firms increasingly offer "compliance wrappers" that "simulate autonomy while deepening dependency" - not through bad faith, but because the market has been rewarded for solving the data residency problem, not the operational independence problem.

That gap is now visible. Here is where it shows up in practice.

Starting with Hyperscaler Infrastructure. Establishing a sovereign cloud region with a major provider was, for many governments, the fastest credible path to in-country AI compute. It delivered data residency, local jurisdiction, and national employment - real value, delivered quickly. The structural limit it reaches: the software that schedules jobs, manages encryption keys, authenticates users, and patches the operating system remains a service operated by the vendor. The control plane - the system that decides what runs, where, and under whose authority - is typically still governed from outside the country. This works well for commercial workloads. For mission-critical government AI, it means operational continuity depends on a continued vendor relationship.

Building National Compute Capacity. Procuring large-scale GPU clusters was the right signal to send - to domestic industry, to international partners, and to the workforce that sovereign AI was a genuine national priority. For many programs, it was the necessary first investment. The system hits a structural limit when raw compute is deployed without a sophisticated operating system for workload orchestration, fractional sharing, and resource reclamation. Industry experience suggests that these unmanaged environments produce utilization rates that can fall well below 50 percent. McKinsey's research identifies this directly, noting that "many sovereign AI initiatives are stalling and failing to deliver their expected results." Compute capacity is necessary but not sufficient. The management layer that governs how that capacity is used is equally important.
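The cost of low utilization is simple arithmetic: every provisioned GPU-hour is paid for, but only the utilized fraction does useful work. A minimal sketch follows, assuming a placeholder unit cost of 1.0 per provisioned GPU-hour; the function name and the sample utilization values are illustrative, not figures from any of the reports.

```python
def cost_per_useful_gpu_hour(cost_per_provisioned_hour: float, utilization: float) -> float:
    """Spread the cost of every provisioned GPU-hour over the hours that do useful work."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    return cost_per_provisioned_hour / utilization


# Hypothetical unit cost of 1.0, chosen only to show the ratio.
for utilization in (0.9, 0.5, 0.4):
    print(utilization, round(cost_per_useful_gpu_hour(1.0, utilization), 2))
```

Under these assumptions, a cluster running at 40 percent utilization pays 2.5 times the provisioned rate for each hour of useful work, which is why the management layer carries as much economic weight as the hardware.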

Investing in Domestic Cloud Providers. Supporting national cloud vendors was, and is, sound industrial policy - building domestic capability, reducing dependence on foreign operators, and creating a local expertise base. Many of these providers are now delivering real value for inference workloads and public sector applications. They are also hitting structural limitations. Most were built on general-purpose data center infrastructure, operating at 10–15 kW per rack when modern AI training workloads require 30–60 kW per rack. Their networking is typically standard Ethernet at 1–10 Gbps when distributed training requires RDMA-capable fabrics at 200–400 Gbps. For inference and application workloads, they are often the right answer. For national programs requiring foundation model training or large-scale research infrastructure, they are approaching the edge of their design envelope.
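The design-envelope comparison can be expressed as a simple gap check. This sketch takes the lower bounds of the ranges quoted above (30 kW per rack, 200 Gbps RDMA-capable fabric) and treats them as hard thresholds, which is a simplifying assumption; the class and function names are hypothetical.

```python
from dataclasses import dataclass

# Lower bounds of the ranges quoted above; using them as hard
# thresholds is an assumption made for illustration.
MIN_TRAINING_RACK_KW = 30.0
MIN_TRAINING_FABRIC_GBPS = 200.0


@dataclass(frozen=True)
class FacilityProfile:
    rack_power_kw: float
    fabric_gbps: float
    rdma_capable: bool


def training_gaps(profile: FacilityProfile) -> list[str]:
    """Return the reasons a facility falls short of the training envelope (empty if none)."""
    gaps = []
    if profile.rack_power_kw < MIN_TRAINING_RACK_KW:
        gaps.append("rack power density")
    if profile.fabric_gbps < MIN_TRAINING_FABRIC_GBPS:
        gaps.append("fabric bandwidth")
    if not profile.rdma_capable:
        gaps.append("RDMA support")
    return gaps


general_purpose = FacilityProfile(rack_power_kw=15.0, fabric_gbps=10.0, rdma_capable=False)
print(training_gaps(general_purpose))
```

An empty result does not make a facility suitable for training on its own; it only indicates that these three constraints are not the blocking ones.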

Establishing Governance Frameworks. Comprehensive AI regulation was a legitimate and necessary exercise of sovereignty - asserting national values, setting standards, and shaping how AI systems operate within a jurisdiction. Several of these frameworks are now global benchmarks. The structural limit they reach: regulatory authority over AI systems does not automatically confer operational independence from the infrastructure those systems run on. The European Union's AI Act is among the world's most sophisticated governance instruments. European organizations still run more than 80 percent of their technology stack on imported infrastructure. Governance and operational independence are complementary goals - but they require separate strategies.

None of these approaches failed. Each delivered what it was designed to deliver.

What has changed is the definition of the problem. The first generation of sovereign AI strategy addressed presence: getting compute in-country, establishing national providers, asserting regulatory authority. The next generation has to address control - ensuring that the infrastructure operating within national borders can actually be operated, governed, and sustained by national institutions under all conditions.

The table below maps what each approach provides and where the next layer of work begins.

What Sovereignty Actually Requires: First Principles

To reason clearly about this, we need to be precise about what AI infrastructure consists of and where operational power actually resides.

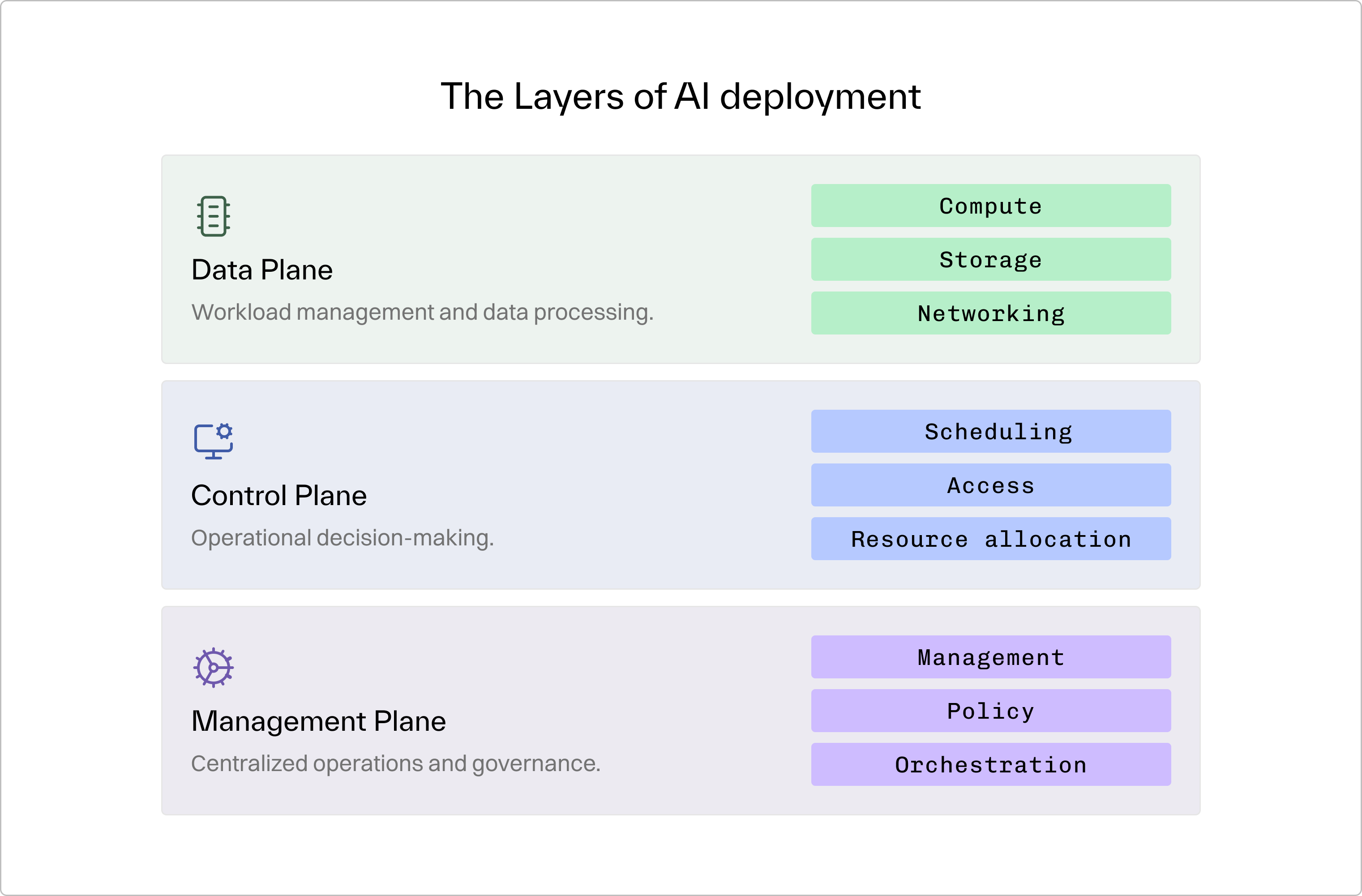

Every AI deployment has three distinct layers: the data plane, the control plane, and the management plane.

This is the critical insight from the research: you can own the data plane entirely - you can hold the deed to the building and the title to the hardware - and still have zero operational sovereignty if the control plane or management plane depends on infrastructure outside your jurisdiction.

These dependencies take specific, auditable forms:

- License or entitlement validation calls to vendor servers

- Identity or token issuance from external identity providers

- Telemetry and metrics pipelines bound to vendor SaaS dashboards

- Remote configuration or optimization services controlled from vendor infrastructure

- Upgrade and patch orchestration requiring connectivity to vendor systems

- Container or model artifact registries not fully mirrored locally

- Key management or metadata services hosted outside jurisdiction

- Vendor support tunnels required for administration or incident response

- Firmware and out-of-band management controllers (BMC/IPMI) operating below the OS layer and often opaque to audit

Failure is typically silent: scheduling degrades, authentication tokens expire, upgrades stall, and management functions become unavailable. The hardware remains, but control shifts to whoever governs those services.
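Because these dependencies are auditable, they can be kept in an explicit inventory and filtered for the ones that matter. The sketch below is one possible shape for such an inventory; the `Dependency` fields and the sample entries are hypothetical, and a real audit would populate them from configuration and network evidence rather than by hand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    name: str                     # e.g. "license validation"
    category: str                 # one of the categories listed above
    hosted_in_jurisdiction: bool  # where the service actually runs
    required_at_runtime: bool     # does normal operation stall without it?


def sovereignty_blockers(inventory: list[Dependency]) -> list[Dependency]:
    """Dependencies that are both external and needed for normal operation."""
    return [d for d in inventory if d.required_at_runtime and not d.hosted_in_jurisdiction]


# Hypothetical inventory entries for illustration only.
inventory = [
    Dependency("license validation", "licensing",
               hosted_in_jurisdiction=False, required_at_runtime=True),
    Dependency("container registry mirror", "artifacts",
               hosted_in_jurisdiction=True, required_at_runtime=True),
    Dependency("vendor telemetry dashboard", "telemetry",
               hosted_in_jurisdiction=False, required_at_runtime=False),
]
for dep in sovereignty_blockers(inventory):
    print(f"BLOCKER: {dep.name} ({dep.category})")
```

The useful property is that the inventory turns a silent failure mode into a named list that can be reviewed before an outage rather than discovered during one.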

The defining test for genuine operational sovereignty is therefore simple and binary:

Can this system operate at full capacity with zero outbound connectivity to vendor infrastructure - without vendor intervention, without configuration workarounds, as a tested and supported operational mode?

Not "mostly yes." Not "yes, but with the following exceptions." Not "yes, with advance notice."

If the answer is anything short of an unqualified yes, sovereignty is conditional rather than absolute.
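The binary test can be approximated in practice by running the system at full load with egress blocked and inspecting every connection it attempted. A minimal sketch using Python's standard `ipaddress` module follows; the address ranges in `DOMESTIC_NETWORKS` and the function names are assumptions for illustration, and the observed destinations would come from firewall or flow logs.

```python
import ipaddress

# Hypothetical address ranges assumed to be inside the jurisdiction's own network.
DOMESTIC_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def external_destinations(observed: list[str]) -> list[str]:
    """Return destination IPs seen during the test that fall outside the domestic ranges."""
    external = []
    for raw in observed:
        addr = ipaddress.ip_address(raw)
        if not any(addr in net for net in DOMESTIC_NETWORKS):
            external.append(raw)
    return external


def passes_isolation_test(observed: list[str], all_health_checks_ok: bool) -> bool:
    """Binary result: full health and not a single attempted external connection."""
    return all_health_checks_ok and not external_destinations(observed)


print(passes_isolation_test(["10.1.2.3", "203.0.113.7"], all_health_checks_ok=True))
```

The function deliberately returns a boolean with no partial credit, matching the framing above: one attempted external connection is enough to fail.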

The Five Architectural Requirements

Genuine operational sovereignty requires satisfying five architectural conditions simultaneously.

1. Control Plane Domesticity

All job scheduling, resource allocation, and system orchestration must execute on hardware within your jurisdiction, under domestic legal authority. You do not need to own the software - you need domestic operational control over it. This means it runs on infrastructure you operate, under legal frameworks you govern, administered by personnel under your authority.

A government can purchase perpetual licenses to infrastructure software and still lack control if the operational systems remain in vendor hands. Conversely, a government can achieve genuine control through a managed services arrangement that includes contractual capability transfer - if, and only if, the transfer is real, timed, and measurably complete.

2. Zero External Dependencies

At full operational capacity, the system must generate zero outbound calls to infrastructure outside its jurisdiction. This means container images, model weights, and system dependencies are cached locally. Any external services that are used, such as updates, monitoring, and support, are pull-based - you initiate contact - not push-based, where the vendor's systems reach into yours. License validation, telemetry, and authentication do not require external connectivity.

This is not an unusual standard. Power grids, financial clearing systems, and defense communications are all designed to operate in isolation.
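One concrete check for the local-caching requirement is to confirm that every container image a workload references resolves to a registry inside the jurisdiction. The sketch below uses a simplified version of the common image-reference convention (the first path component counts as a registry host only if it contains a dot or colon, or is `localhost`); the registry hostnames and function names are hypothetical.

```python
def registry_host(image_ref: str, default: str = "docker.io") -> str:
    """Extract the registry host from an image reference (simplified heuristic).

    The first path component is treated as a registry only if it contains
    '.' or ':' or equals 'localhost'; otherwise the default registry applies.
    """
    first, sep, _rest = image_ref.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return default


def images_needing_mirror(image_refs: list[str], local_registry: str) -> list[str]:
    """Return image references that would be pulled from outside the local registry."""
    return [ref for ref in image_refs if registry_host(ref) != local_registry]


# 'registry.sovereign.example' stands in for an in-jurisdiction mirror.
refs = [
    "registry.sovereign.example/ml/trainer:1.4",
    "registry.vendor.example/runtime/base:2.0",
    "python:3.12-slim",
]
print(images_needing_mirror(refs, "registry.sovereign.example"))
```

Note that the unqualified reference `python:3.12-slim` is flagged too: an image name with no registry host silently implies an external default, which is exactly the kind of hidden dependency this requirement targets.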

3. Operational Independence

Your team - national citizens with domestic employment arrangements - can operate, upgrade, troubleshoot, and recover the system without vendor intervention. Identity management, policy enforcement, and audit logging run on local databases. The skills required to run the system at its full capability exist within your national workforce, not in a vendor's global SRE organization.

The Tony Blair Institute's research on talent and skills makes clear how scarce this capability is globally. Advanced AI infrastructure expertise is concentrated in a small number of firms and geographies.

4. Hardware Agnosticism

The architecture must accommodate substitution of GPU vendor, networking vendor, and storage vendor without requiring a full platform re-architecture.

GPU architectures evolve every two to three years. Any platform that is structurally coupled to a specific generation of hardware will require a fundamental rebuild at each refresh cycle rather than absorbing the upgrade gracefully. That coupling compounds into significant cost, downtime, and operational risk across whatever lifecycle the infrastructure ultimately serves.

As Brookings documents, advanced chip fabrication, lithography equipment, and GPU design are each concentrated in a small number of jurisdictions globally. Responsible infrastructure design - for AI or any other critical national system - accounts for concentration risk through architectural flexibility, regardless of how trusted today's suppliers are.

Hardware agnosticism does not mean avoiding the best available accelerators; those should absolutely be deployed. It means the platform's orchestration layer abstracts the hardware interface cleanly enough that when the next generation arrives - or when procurement conditions shift for any reason - the transition is a configuration change, not a reconstruction project.
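What "a configuration change, not a reconstruction project" can look like in code is an orchestration layer written against an interface rather than a vendor. The following is a minimal sketch using `typing.Protocol`; the `AcceleratorBackend` interface, the two backend classes, and the `accelerator_backend` configuration key are all hypothetical and do not correspond to any real vendor SDK.

```python
from typing import Protocol


class AcceleratorBackend(Protocol):
    """Hypothetical interface the orchestration layer codes against."""

    def allocate(self, device_count: int) -> list[str]: ...


class VendorABackend:
    def allocate(self, device_count: int) -> list[str]:
        return [f"vendor-a-gpu-{i}" for i in range(device_count)]


class VendorBBackend:
    def allocate(self, device_count: int) -> list[str]:
        return [f"vendor-b-gpu-{i}" for i in range(device_count)]


# Registry of available backends; adding a vendor means adding one entry here.
BACKENDS: dict[str, type] = {"vendor-a": VendorABackend, "vendor-b": VendorBBackend}


def load_backend(config: dict[str, str]) -> AcceleratorBackend:
    """Select the backend from configuration; callers never name a vendor directly."""
    return BACKENDS[config["accelerator_backend"]]()


def launch_job(backend: AcceleratorBackend, device_count: int) -> list[str]:
    """Job logic depends only on the interface, not on any vendor class."""
    return backend.allocate(device_count)


print(launch_job(load_backend({"accelerator_backend": "vendor-b"}), 2))
```

Swapping vendors here touches only the configuration value; `launch_job` and everything built on it stay unchanged. Real platforms face far more surface area (drivers, collectives, memory models), so this shows the shape of the abstraction rather than its difficulty.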

5. Auditability and Explicit Dependencies

Every component must be locatable. Every operator must be identifiable. Every data flow must be mappable. Regulators and national security authorities must be able to verify, not merely assert, that the system operates within its defined boundaries. External dependencies must fail visibly - with clear error states and explicit operational consequences - rather than silently degrading capability. Any hybrid or burst configuration that involves external connectivity must require explicit policy authorization rather than being the default behavior.

If you cannot demonstrate to a regulator exactly where every component runs, you cannot claim sovereignty.
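The two behaviors described here, failing visibly and requiring explicit authorization for external connectivity, can be sketched as a deny-by-default policy object. The class names, the audit log layout, and the example hostname are assumptions for illustration only.

```python
class UnauthorizedEgressError(RuntimeError):
    """Raised loudly when a component tries to reach an unapproved external endpoint."""


class EgressPolicy:
    """Deny by default; every external destination needs an explicit, recorded approval."""

    def __init__(self) -> None:
        self._approved: dict[str, str] = {}               # destination -> approving authority
        self.audit_log: list[tuple[str, str, str]] = []   # (component, destination, outcome)

    def authorize(self, destination: str, approved_by: str) -> None:
        self._approved[destination] = approved_by

    def check(self, component: str, destination: str) -> None:
        """Record the attempt, then either allow it or fail with an explicit error."""
        if destination not in self._approved:
            self.audit_log.append((component, destination, "DENIED"))
            raise UnauthorizedEgressError(
                f"{component} attempted external connection to {destination} without authorization"
            )
        self.audit_log.append((component, destination, "ALLOWED"))


policy = EgressPolicy()
try:
    policy.check("scheduler", "burst.partner.example")
except UnauthorizedEgressError as err:
    print(err)

policy.authorize("burst.partner.example", approved_by="infrastructure-board")
policy.check("scheduler", "burst.partner.example")
print(policy.audit_log)
```

Both the denied and the allowed attempt end up in the audit log, so a reviewer can verify behavior from evidence rather than from assertion.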

The Economics Nobody Is Talking About

The policy literature on sovereign AI has extensively analyzed geopolitical dependencies and regulatory frameworks. It has almost entirely ignored how AI infrastructure is financed, and that omission materially shapes sovereign outcomes.

The traditional AI infrastructure supply chain extracts margin at every layer. When these stack, a government running AI on commercial cloud is effectively paying venture-capital returns on what should be utility-priced assets.
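The stacking effect is multiplicative: each layer marks up the price it pays to the layer below. A minimal sketch of that arithmetic follows; the three markup values are hypothetical placeholders, not figures from any report, and the function name is illustrative.

```python
import math


def stacked_price_multiple(layer_markups: list[float]) -> float:
    """Price paid by the end customer as a multiple of the underlying asset cost.

    Each layer marks up the price it pays to the layer below, so markups multiply.
    """
    return math.prod(1.0 + m for m in layer_markups)


# Hypothetical markups for three intermediary layers.
markups = [0.20, 0.30, 0.25]
print(round(stacked_price_multiple(markups), 2))
```

With these placeholder values the end price is roughly 1.95 times the underlying cost, noticeably more than the 1.75 that simply adding the three markups would suggest.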

Critical infrastructure is financed at low cost of capital because assets are long-lived and demand is predictable. AI infrastructure shares these characteristics at the physical layer. The compute layer is less settled. Nobody yet knows whether GPU clusters follow a three-year consumer electronics cadence or a five-year-plus enterprise pattern. But the financing model should not be decided by that uncertainty. Infrastructure financing with defined refresh provisions is how every other critical infrastructure category handles technological evolution. Power plants add generation capacity without rebuilding the grid.

The question governments should be asking is not whether to partner with external providers, but what kind of partnership they are entering: one that builds domestic capability toward independence, or one that deepens dependency while calling itself sovereignty.

The Sovereignty Spectrum, Reframed as Architecture

Most frameworks describe sovereignty as a spectrum - from Oxford’s compute layers to McKinsey’s tiers and the Tony Blair Institute’s Control/Steer/Depend continuum. These approaches are directionally correct but tend to classify countries based on what they own.

A more useful lens is what happens when external conditions change.

Sovereignty is not a static property of asset ownership. It is a measure of resilience: whether a system can continue to operate, sustain itself, and recover when vendor relationships shift, geopolitical alignment changes, or supply chains are constrained.

A country can own infrastructure and still be operationally dependent. Another can own little but have a clear path to independent operation through capability transfer and control over critical systems. Ownership provides assets. Operational independence provides agency.

Sovereignty is agency.

The architecture that follows is not a technical specification. It is what agency looks like in practice: prioritizing operational independence, resilience, and efficiency across power, compute, and control.

Power, as the Foundation

An AI factory at national scale is the most power-dense industrial facility of its type ever built. A single large-scale training run can consume the equivalent energy of a small city. Grid interconnection timelines in most countries run three to five years, longer than infrastructure refresh cycles, and legacy grid infrastructure was not designed to concentrate 500 MW at a single site.

The answer is behind-the-meter architecture: co-locating compute with dedicated generation, establishing a private electrical connection that bypasses shared grid constraints. The sovereign AI facility becomes its own energy customer, achieving utility-grade reliability and long-term price stability on a schedule that serves national priorities rather than grid planning cycles.

The WEF research identifies energy as a structural constraint for most economies' AI ambitions. The Tony Blair Institute notes that "countries enter this new landscape from profoundly different starting points" on energy. Norway's hydropower, France's nuclear capacity, and Middle Eastern solar potential are genuine structural advantages. But even energy-constrained nations can resolve this through co-located generation, grid hardening, and power purchase agreements structured as infrastructure financing rather than consumption costs.

Bare Metal, Not Virtualization

For sovereign AI deployments, bare metal is not a performance preference. It is a sovereignty requirement. Virtualization layers introduce external management dependencies and impose overhead that independent benchmarks have measured in the high-single-digit to low-double-digit percentage range, translating directly into wasted energy and capital. More fundamentally, bare metal enables hardware-enforced isolation across compute, storage, and networking. Security boundaries become properties of the physical system rather than software configurations.

A bare metal foundation does not imply rigidity. Modern bare metal platforms layer cloud-native AI services directly on physical infrastructure: supercomputer clusters, GPU instances, Kubernetes environments, inference endpoints, fine-tuning pipelines, all provisioned from the same hardware pool without introducing new dependency layers.

A Heterogeneous Control Plane

Real sovereign AI clusters are not homogeneous. They contain mixed GPU generations from one or more vendors, mixed network fabrics, and mixed storage systems. This is not a failure of planning - it is the inevitable result of procurement cycles, hardware evolution, and the sensible goal of avoiding single-vendor lock-in. The control plane must be designed for this reality from the beginning.

This requires bare-metal provisioning as a base primitive, Kubernetes-native orchestration on top for workload scheduling across hardware classes, and hardware-level isolation components - such as NVIDIA BlueField DPUs - that enforce tenant isolation and root of trust at the network and storage level without depending on hypervisor or software-layer security.

Multi-Site Federation

Sovereign nations have portfolios of locations: defense facilities, research institutions, government data centers, commercial partnerships, each with different security classifications, energy profiles, and administrative jurisdictions. The control plane must federate compute across these sites while respecting data residency and classification boundaries. Workload placement policies must be enforceable at the platform level. Unified observability must provide a single view of utilization, health, and policy compliance across all sites. Scheduling must account for real-time energy availability per site, particularly for behind-the-meter facilities with variable generation profiles.

The critical design principle: every site must be capable of independent operation if connectivity to other sites is severed. Isolation must be the safe failure mode, not the exceptional one.
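Platform-level placement policy can be sketched as a filter over sites followed by a ranking. In the example below, classification, residency, and power are hard constraints, and a site whose link is severed is simply skipped by the federated scheduler while continuing to run its own local work. The `Site` and `Workload` fields, the integer classification scale, and the sample sites are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    name: str
    max_classification: int   # highest classification level the site is cleared for
    region: str               # administrative jurisdiction for data residency
    available_mw: float       # power currently available for new work
    reachable: bool = True    # False when the link to this site is severed


@dataclass(frozen=True)
class Workload:
    classification: int
    allowed_regions: frozenset[str]
    required_mw: float


def eligible_sites(workload: Workload, sites: list[Site]) -> list[Site]:
    """Apply hard policy constraints first, then rank by power headroom."""
    candidates = [
        s for s in sites
        if s.reachable
        and s.max_classification >= workload.classification
        and s.region in workload.allowed_regions
        and s.available_mw >= workload.required_mw
    ]
    return sorted(candidates, key=lambda s: s.available_mw, reverse=True)


sites = [
    Site("research-campus", 1, "north", 12.0),
    Site("defense-dc", 3, "north", 5.0),
    Site("gov-dc", 2, "south", 20.0),
    Site("national-lab", 2, "north", 9.0),
    Site("remote-hydro", 3, "north", 30.0, reachable=False),
]
job = Workload(classification=2, allowed_regions=frozenset({"north"}), required_mw=4.0)
print([s.name for s in eligible_sites(job, sites)])
```

Ordering the checks this way means energy availability can only influence the choice among sites that already satisfy classification and residency rules; it can never override them.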

Tenancy as Policy, Not Compromise

Sovereign AI infrastructure serves multiple national stakeholders simultaneously with radically different security requirements. Building separate infrastructure for each classification level is enormously wasteful and frequently impractical.

The alternative is a unified platform with tenancy enforced at the infrastructure level. Soft tenancy shares GPU nodes between tenants using platform-enforced namespace isolation, appropriate for research and development workloads. Strict tenancy reserves entire GPU nodes for single tenants, appropriate for production government services and regulated environments. Private tenancy provides a fully dedicated single-tenant environment with independent platform management, appropriate for defense, intelligence, and national security missions requiring complete segregation.

These are not organizational conventions. They are architectural properties. A single national AI fleet can support innovation, efficiency, and the most sensitive national security missions simultaneously without duplicating infrastructure.
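The three tenancy modes can be expressed as a placement rule that the platform enforces on every scheduling decision. This is a minimal sketch under stated assumptions: the `Node` model, the `dedicated_env` field, and the tenant names are hypothetical, and real platforms would enforce these rules in the scheduler and the network, not in application code.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Tenancy(Enum):
    SOFT = "soft"        # shared GPU nodes with namespace isolation
    STRICT = "strict"    # whole node reserved for a single tenant
    PRIVATE = "private"  # fully dedicated single-tenant environment


@dataclass
class Node:
    name: str
    dedicated_env: Optional[str] = None                        # owner of a private environment
    occupants: dict[str, Tenancy] = field(default_factory=dict)  # tenant -> mode on this node


def can_place(node: Node, tenant: str, mode: Tenancy) -> bool:
    """Decide whether a tenant may run on a node under the requested tenancy mode."""
    if node.dedicated_env is not None:
        # Nodes in a private environment serve only that environment's owner.
        return mode is Tenancy.PRIVATE and node.dedicated_env == tenant
    if mode is Tenancy.PRIVATE:
        return False  # private workloads never land on the shared pool
    others = {t: m for t, m in node.occupants.items() if t != tenant}
    if mode is Tenancy.STRICT:
        return not others  # strict tenants need the whole node
    return all(m is Tenancy.SOFT for m in others.values())  # soft shares only with soft


shared = Node("gpu-01", occupants={"university-lab": Tenancy.SOFT})
defense = Node("gpu-03", dedicated_env="defense")
print(can_place(shared, "startup", Tenancy.SOFT))       # sharing between soft tenants
print(can_place(shared, "tax-agency", Tenancy.STRICT))  # node already has another tenant
print(can_place(defense, "defense", Tenancy.PRIVATE))   # owner of the private environment
```

Because the rule is evaluated by the platform rather than agreed between teams, the same hardware pool can host all three modes without relying on organizational discipline.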

The Stakes

The Tony Blair Institute puts the urgency in the sharpest terms: "Countries that fail to adopt and deploy AI at scale risk ceding their competitiveness and, ultimately, elements of their sovereignty to those who do." Critically, the same report makes clear that the risk runs in both directions: not just the risk of failing to build AI capability, but the risk of building AI capability that is structurally dependent on foreign control planes - capability that can be withdrawn, degraded, or weaponized by actors outside your jurisdiction.

Nations that invest in the appearance of sovereign AI without the operational reality are not making a neutral choice. They are committing to a dependency that compounds over time, as vendor lock-in deepens, domestic operational capability atrophies for lack of use, and the technical gap between locally owned infrastructure and the frontier widens.

The nations that get this right - that build genuine operational independence over their AI infrastructure, that achieve the kind of control plane sovereignty that makes the other levels of the Oxford framework mean something - will not merely have competitive advantage in the AI era. They will have the foundational capability from which all other strategic options become available: the ability to train models on their own data, to develop applications that serve their own populations, to partner with international actors from a position of genuine agency rather than managed dependence.

Sovereignty in the age of AI is not a single decision or a single investment. It is a sustained architectural commitment. It is designed, monitored, and maintained. It is not finished.

But it has to start in the right place. Not at the data center. At the control plane.