At Radiant, one of our goals is to provide more compute choices, because every AI workload has unique needs. As demand for AI inference grows, the NVIDIA L40S GPU has become a popular choice for serving small to medium-sized AI models. This article will help you decide whether the L40S is the right choice for your workload and how you can deploy this powerful GPU on Radiant AI Cloud.

A versatile GPU that excels at many tasks

Powered by the flexible Ada Lovelace architecture, which includes both 4th Gen Tensor Cores and 3rd Gen ray tracing (RT) Cores, the L40S is one of the most versatile GPUs in the NVIDIA lineup. The Tensor Cores and Deep Learning Super Sampling (DLSS) help deliver strong AI and data science performance, while the RT Cores enable photorealistic rendering, enhanced ray tracing, and powerful shading, which makes this data center GPU suitable for professional visualization workloads as well.

Here’s a snapshot of the NVIDIA L40S specifications:

Consider NVIDIA L40S for these use cases

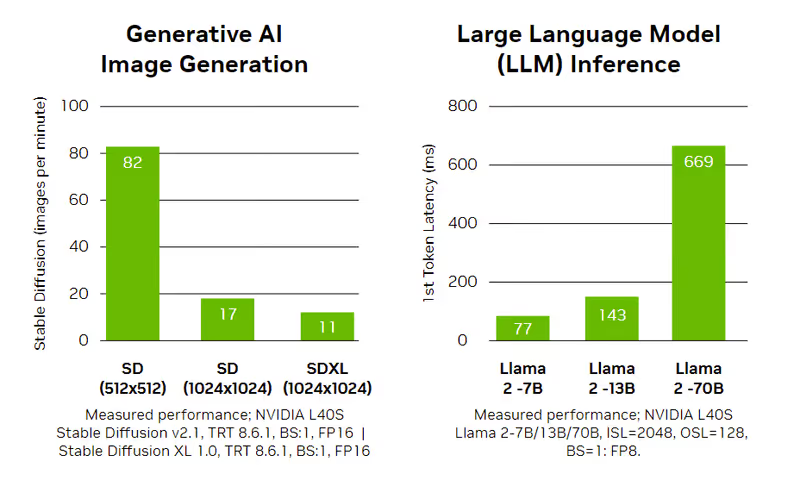

- Affordable AI inference, especially for small and medium-sized LLMs and multimodal models: L40S GPUs are 28% and 40% cheaper than A100 and H100 PCIe GPUs, respectively. Multimodal models such as Llama 3.2 11B Vision and Flux Schnell run smoothly on L40S GPUs.

- Train or finetune small AI models, where the L40S delivers strong computational power without the need for the extensive capabilities of heavyweight GPUs like the H100.

- Perfect for graphics-intensive applications requiring advanced rendering and real-time processing. Equipped with 3rd Gen RT Cores that deliver up to twice the real-time ray-tracing performance of the previous generation, the L40S enables breathtaking visual content and high-fidelity creative workflows, from interactive rendering to real-time virtual production.

- A versatile mix of visual and general-purpose computing makes it perfect for a wide range of uses, like product engineering, architecture, construction, virtual reality (VR), and augmented reality (AR).

- When you need strong performance but don’t require multiple GPUs for large parallel tasks. The L40S features higher onboard memory (48 GB) than several NVIDIA GPUs such as V100, V100S, and L4, making it capable of handling comparatively larger models.
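The memory point in the last bullet can be checked with quick arithmetic. The sketch below is a rough estimate only: it assumes model weights dominate memory use and reserves a flat headroom fraction for the KV cache, activations, and runtime overhead. The function names and the 20% headroom value are illustrative assumptions, not figures from NVIDIA or Radiant.

```python
# Rough check of whether a model's weights fit in a GPU's memory.
# Assumption: weights dominate; KV cache, activations, and runtime overhead
# are approximated by a flat headroom fraction (hypothetical 20%).

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "fp8": 1}


def weight_memory_gb(params_billion: float, dtype: str) -> float:
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[dtype]


def fits(params_billion: float, dtype: str, vram_gb: float, headroom: float = 0.2) -> bool:
    """True if the weights fit after reserving `headroom` of the VRAM."""
    usable_gb = vram_gb * (1 - headroom)
    return weight_memory_gb(params_billion, dtype) <= usable_gb


if __name__ == "__main__":
    # An 11B-parameter model in FP16 needs about 22 GB for weights.
    print(weight_memory_gb(11, "fp16"))   # 22
    print(fits(11, "fp16", vram_gb=48))   # L40S (48 GB)
    print(fits(11, "fp16", vram_gb=24))   # L4 (24 GB)
```

Under these assumptions, an 11B-parameter model in FP16 fits on a 48 GB L40S but not on a 24 GB L4. Real deployments should be validated with actual batch sizes and context lengths.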

NVIDIA H100 vs L40S specifications

*With Sparsity

AI Inference: NVIDIA L40S vs H100

Llama 2 Performance & Cost Comparison

*Source: NVIDIA

**Tensor Parallelism = 1, FP8, TensorRT-LLM 0.11.0 framework

***The performance numbers are based on benchmarks from NVIDIA. Actual performance may vary depending on factors such as system configuration, form factor, operating environment, and type of workload. We encourage users to run their own benchmarks before deciding on GPUs for more extensive workloads.
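When you run your own benchmarks, one simple way to compare GPUs on cost is to convert measured throughput and hourly price into cost per million tokens. The helper below is a minimal sketch; the function name is hypothetical, and the prices and throughputs in the example are placeholders, not Radiant pricing or NVIDIA benchmark results.

```python
def cost_per_million_tokens(price_per_gpu_hour: float, tokens_per_second: float) -> float:
    """Convert an hourly GPU price and a measured throughput into $ per 1M tokens."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return price_per_gpu_hour / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Placeholder inputs: substitute your own measured throughput and actual pricing.
    candidates = {
        "gpu_a": {"price_per_gpu_hour": 1.00, "tokens_per_second": 1000.0},
        "gpu_b": {"price_per_gpu_hour": 2.50, "tokens_per_second": 3000.0},
    }
    for name, cfg in candidates.items():
        print(f"{name}: ${cost_per_million_tokens(**cfg):.3f} per 1M tokens")
```

A cheaper GPU is not automatically cheaper per token: the comparison depends on how much throughput each GPU sustains on your model, batch size, and sequence lengths.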

How does NVIDIA L40S compare with NVIDIA A100

Although both GPUs are excellent choices for inference, the A100 can also double as a training-ready GPU for deep learning and HPC workloads. The A100’s superior memory specifications and multi-GPU support give it more range. A100 GPUs also support fractional instances, making them equally flexible for smaller workloads. Here’s a quick rundown of A100 vs L40S specifications:

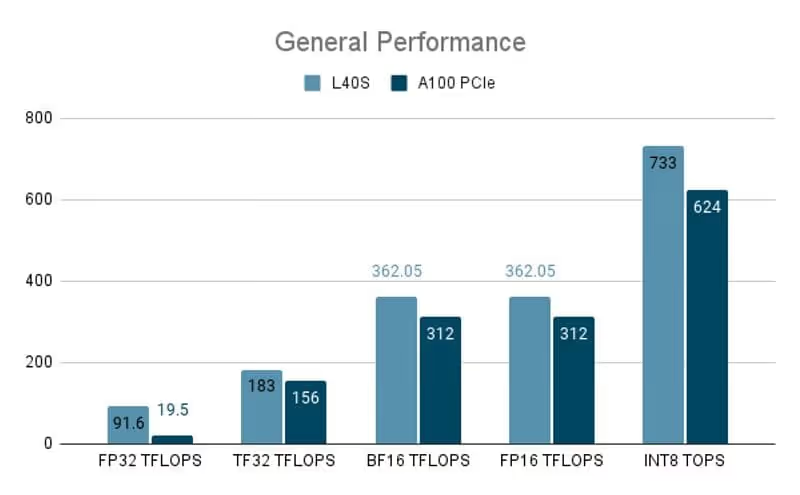

In terms of raw compute, the L40S has an edge, with up to 4.7x higher FP32 throughput (TFLOPS). However, for many workloads GPU memory (VRAM) is as important as processing power, especially as models grow in parameter count, and this is where the A100 shines with its larger onboard memory.
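The 4.7x figure can be reproduced from peak FP32 numbers. The values below are taken from NVIDIA's public datasheets rather than from this article, so treat them as assumptions to verify against the current datasheets.

```python
# Peak FP32 throughput in TFLOPS, per NVIDIA's public datasheets
# (assumed values; not stated in the article text).
L40S_FP32_TFLOPS = 91.6
A100_FP32_TFLOPS = 19.5


def speedup(a_tflops: float, b_tflops: float) -> float:
    """Ratio of peak throughput of GPU A over GPU B."""
    return a_tflops / b_tflops


if __name__ == "__main__":
    print(f"{speedup(L40S_FP32_TFLOPS, A100_FP32_TFLOPS):.1f}x")  # prints "4.7x"
```

This is a ratio of peak theoretical numbers only; sustained performance depends on memory bandwidth, precision, and the workload itself.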

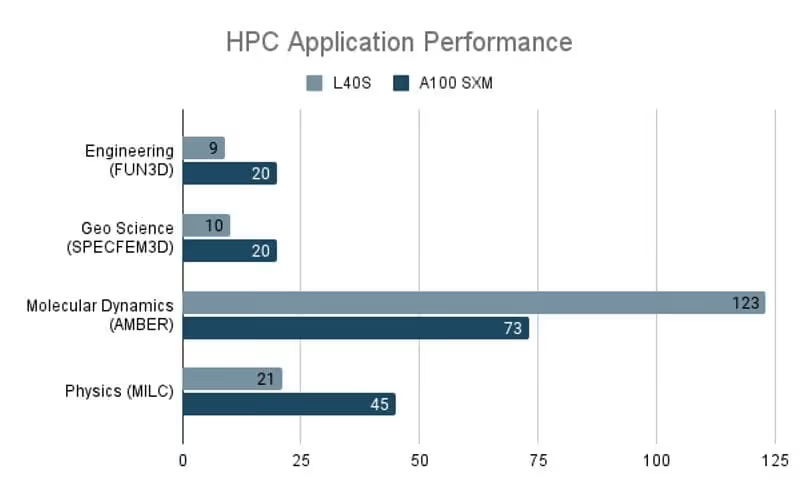

The NVIDIA A100, with its support for 64-bit formats, strong FP64 performance, and larger memory capacity, outperforms the L40S for HPC workloads.

Source: NVIDIA

Get started with NVIDIA L40S today

The NVIDIA L40S GPU offers a versatile balance of AI inference performance and 3D rendering capabilities, making it ideal for small to medium AI models, graphics-heavy applications, and multimodal models. It is a cost-effective alternative to the H100 and A100 GPUs for smaller inference tasks, while being well-suited for AI and content creation workloads.

Deploy NVIDIA L40S on Radiant in any of these modes:

- GPU instances: on-demand virtual machines backed by top-tier GPUs to train, finetune, and serve AI models.

- Serverless Kubernetes: run inference at scale without having to manage infrastructure.

- Inference Endpoints: production-grade AI inference that is fast, elastic, and globally distributed.