NVIDIA’s new open source AI model, Nemotron 3 Super, marks a significant development in large language model (LLM) deployment by targeting a crucial bottleneck: the choice between expensive, highly capable dense models and fast, less accurate small models. By operating with the computational footprint of a 12B model while delivering advanced reasoning abilities, this 120-billion-parameter model promises greater efficiency for increasingly complex workloads.

The Nemotron 3 Super announcement is timely, as multi-agent AI systems and LLMs are rapidly transforming how enterprise software platforms handle long-horizon tasks like software engineering, cybersecurity triaging, and complex research. Although these orchestrated multi-agent applications open up new use cases and speed up workflows, they introduce a steep cost challenge: autonomous agents can generate up to 15 times the token volume of standard chat interactions. This increase, often referred to as the "thinking tax," along with massive context explosion and potential goal drift, threatens the cost-effectiveness of deploying agentic AI in production.

To maximize the efficiency of token generation, Nemotron 3 Super uses a hybrid Mamba-Transformer Mixture-of-Experts (MoE) architecture optimized for deep reasoning. The Mamba layers efficiently process massive sequences (up to a 1M-token context window, with 256K context also supported), while the Transformer layers are selectively engaged for complex reasoning. It also introduces two further techniques: Latent MoE with router-layer fine-tuning, which activates four expert specialists for the cost of one, and Multi-Token Prediction (MTP), which predicts multiple future tokens simultaneously to accelerate inference and enable long-form generation.

Here is a summary of the Nemotron 3 Super specifications:

By activating only a fraction of its parameters per token, Nemotron 3 Super delivers the "brainpower" of a frontier model while fundamentally changing the cost curve for long-context agentic work. This makes it ideal for tool calling use-cases, data science workflows, semiconductor design automation, molecular understanding in drug discovery, deep literature search, and AI-native companies looking to automate workflows without the overhead of proprietary models. The model can be fine-tuned locally for domain-specific applications.

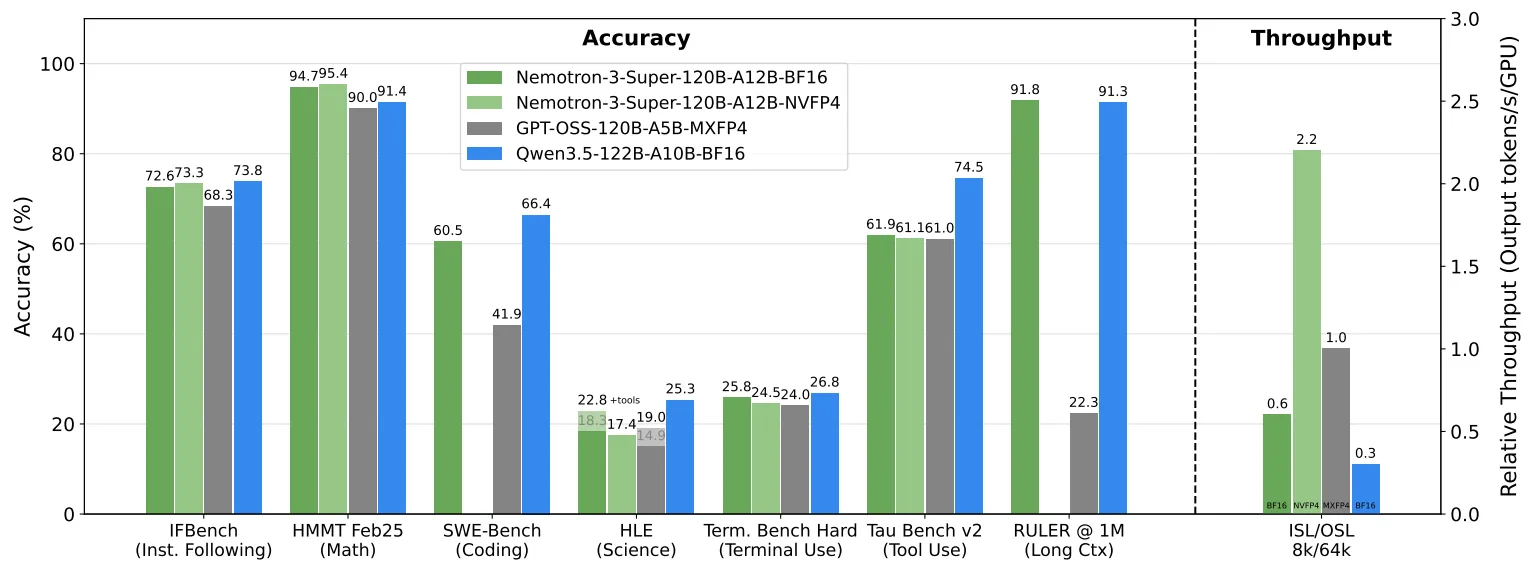

Nemotron 3 Super outperforms models such as Qwen 3 and gpt-oss-120b in reasoning tasks and is also significantly faster (2.2x throughput of gpt-oss-120b and 2x of qwen3-122b). This massive speed advantage stems from its architectural trifecta: while OpenAI’s open-source models suffer from the quadratic compute costs of standard Transformers, Nemotron's Mamba layers process long sequences with linear efficiency. When combined with Latent MoE (routing to multiple experts without the usual compute penalty) and MTP (generating several words simultaneously), the model effectively bypasses the memory bandwidth bottlenecks that throttle its peers.
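To make the scaling argument concrete, here is a rough sketch that compares how cost grows with context length. It assumes an idealized model in which full self-attention costs on the order of n² per sequence and a linear-time layer costs on the order of n. The function names are ours, and the numbers are relative growth factors, not measurements of either model.

```python
def quadratic_cost(n_tokens: int) -> int:
    """Relative cost when every token attends to every other token."""
    return n_tokens * n_tokens


def linear_cost(n_tokens: int) -> int:
    """Relative cost when each token is processed in constant time."""
    return n_tokens


def growth(cost_fn, short_ctx: int, long_ctx: int) -> float:
    """How many times more expensive the long context is than the short one."""
    return cost_fn(long_ctx) / cost_fn(short_ctx)


if __name__ == "__main__":
    short_ctx, long_ctx = 256_000, 1_000_000
    print(f"quadratic growth: {growth(quadratic_cost, short_ctx, long_ctx):.1f}x")
    print(f"linear growth:    {growth(linear_cost, short_ctx, long_ctx):.1f}x")
```

Under these assumptions, going from a 256K to a 1M context costs roughly 15x more for a quadratic layer but only about 4x more for a linear one.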

How to run Nemotron 3 on an H100 GPU

Prerequisites

To get started, create a GPU virtual machine (VM) on Radiant AI Cloud.

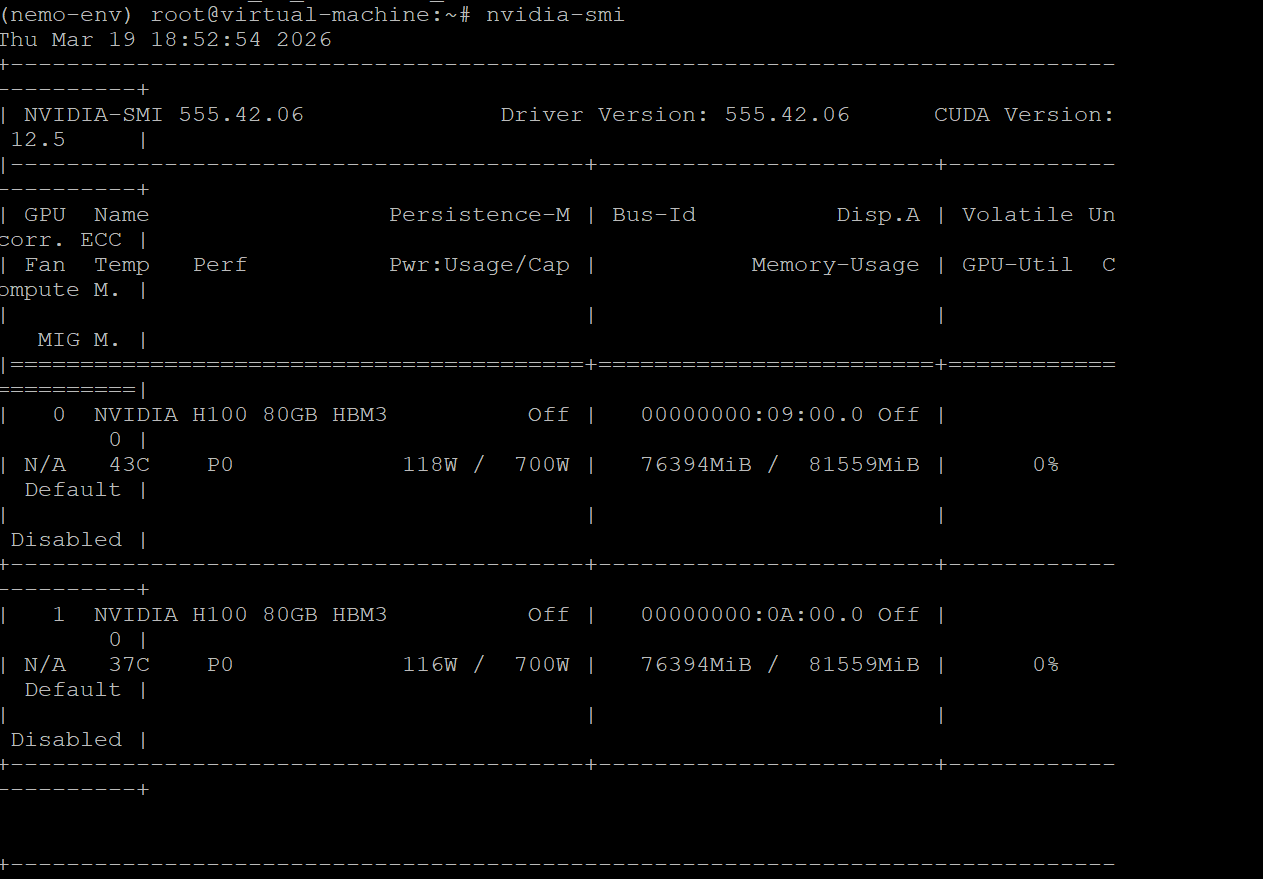

We selected 2x NVIDIA H100 GPUs for this tutorial because they offer a strong combination of availability and cost-efficiency in the market. While upgrading to H200 or B200 hardware would unlock superior performance and larger context windows, the steps outlined in this guide remain consistent across these architectures. We’ll be running the FP8 model for this tutorial.
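As a back-of-the-envelope check on why two GPUs are a reasonable fit, the sketch below estimates the weight memory per GPU. It rests on assumptions that are ours, not the article's: roughly 1 byte per parameter for FP8 weights, 80 GB of memory per H100, and an even split across GPUs under tensor parallelism. It ignores activations, any layers kept in higher precision, and runtime overhead, so treat the result as a lower bound.

```python
def weights_per_gpu_gb(total_params_billion: float,
                       bytes_per_param: float,
                       num_gpus: int) -> float:
    """Approximate weight memory per GPU in GB (1e9 params x bytes / 1e9)."""
    total_gb = total_params_billion * bytes_per_param
    return total_gb / num_gpus


def headroom_gb(gpu_memory_gb: float,
                memory_utilization: float,
                weights_gb: float) -> float:
    """Memory left for KV cache and activations within the utilization budget."""
    return gpu_memory_gb * memory_utilization - weights_gb


if __name__ == "__main__":
    # Assumed values: 120B parameters, FP8 (1 byte each), 2 GPUs with 80 GB each.
    per_gpu = weights_per_gpu_gb(120, 1.0, 2)
    spare = headroom_gb(80, 0.9, per_gpu)
    print(f"weights per GPU: ~{per_gpu:.0f} GB, headroom: ~{spare:.0f} GB")
```

With these assumed figures, the weights take about 60 GB per GPU, leaving on the order of 12 GB per GPU for the KV cache at a 0.9 memory-utilization setting.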

Quick Tip: Use the initialization script during VM creation to pre-install the NVIDIA CUDA drivers and PyTorch.

Step 1: SSH into your VM and set up the environment

apt install python3.12-venv

python3.12 -m venv nemo-env

source nemo-env/bin/activate

Step 2: Install the latest vLLM

pip install -U vllm==0.17.1 torch==2.10.0 flashinfer-python==0.6.4 flashinfer-cubin==0.6.4 'nvidia-cutlass-dsl>=4.4.0.dev1' --extra-index-url https://download.pytorch.org/whl/cu128

Step 3: Download the Nemotron 3 Super Parser

wget "https://huggingface.co/nvidia/NVIDIA-Nemotron-3-Super-120B-A12B-FP8/resolve/main/super_v3_reasoning_parser.py"

Step 4: Run the vLLM server

We’re serving nvidia/NVIDIA-Nemotron-3-Super-120B-A12B-FP8 on port 8000 under the name nemotron, with tensor parallelism set to 2 to match the two GPUs.

vllm serve nvidia/NVIDIA-Nemotron-3-Super-120B-A12B-FP8 \
  --async-scheduling \
  --dtype auto \
  --kv-cache-dtype fp8 \
  --tensor-parallel-size 2 \
  --pipeline-parallel-size 1 \
  --data-parallel-size 1 \
  --swap-space 0 \
  --trust-remote-code \
  --attention-backend TRITON_ATTN \
  --gpu-memory-utilization 0.9 \
  --enable-chunked-prefill \
  --max-num-seqs 512 \
  --served-model-name nemotron \
  --host 0.0.0.0 \
  --port 8000 \
  --enable-auto-tool-choice \
  --tool-call-parser qwen3_coder \
  --reasoning-parser-plugin "./super_v3_reasoning_parser.py" \
  --reasoning-parser super_v3

Here’s a snapshot of the GPU instance, showing memory usage of about 76 GB of VRAM on each GPU.
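Before sending prompts, it can help to confirm that the server is up and that the model is registered under the expected name. The sketch below queries the `/v1/models` endpoint of vLLM's OpenAI-compatible server using only the Python standard library. The helper names (`fetch_models`, `served_model_ids`, `check_server`) are ours, and `VM-IP` is the same placeholder used elsewhere in this guide.

```python
import json
import urllib.request


def served_model_ids(models_payload: dict) -> list[str]:
    """Extract model names from an OpenAI-style /v1/models response."""
    return [entry["id"] for entry in models_payload.get("data", [])]


def fetch_models(base_url: str, timeout: float = 10.0) -> dict:
    """GET {base_url}/v1/models and decode the JSON body."""
    with urllib.request.urlopen(f"{base_url}/v1/models", timeout=timeout) as resp:
        return json.load(resp)


def check_server(base_url: str, expected_model: str = "nemotron") -> bool:
    """Return True if the server answers and lists the expected model name."""
    try:
        ids = served_model_ids(fetch_models(base_url))
    except OSError as exc:  # connection refused, DNS failure, timeout, HTTP error
        print(f"server not reachable yet: {exc}")
        return False
    print(f"served models: {ids}")
    return expected_model in ids
```

Calling `check_server("http://VM-IP:8000")` after the server finishes loading should list `nemotron`, the name set by `--served-model-name`.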

Step 5: Test the model with cURL

You can interact with the model using curl. Replace VM-IP with the IP address of your VM.

curl http://VM-IP:8000/v1/chat/completions \
  -H "Content-Type: application/json" \
  -d '{
    "model": "nemotron",
    "messages": [{"role": "user", "content": "Explain Jevon'\''s Paradox in a single sentence"}],
    "chat_template_kwargs": {"enable_thinking": false}
  }' | jq -r '.choices[0].message.content'

Step 6: Install Jupyter Notebook for ease of interaction and run the OpenAI Python SDK

pip install notebook

jupyter notebook --allow-root --no-browser --ip=0.0.0.0

In a notebook cell, point the client at the vLLM server on port 8000 (Jupyter itself listens on port 8888 by default) and use the served model name, nemotron:

from openai import OpenAI

# vLLM does not check the API key unless one is configured, so any placeholder works.
client = OpenAI(
    base_url="http://VM-IP:8000/v1",
    api_key="EMPTY"
)

messages = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "5.9 - 5.11"}
]

# The model name must match --served-model-name from the vllm serve command.
response = client.chat.completions.create(
    model="nemotron",
    messages=messages,
    extra_body={"chat_template_kwargs": {"enable_thinking": False}}
)

print(response.choices[0].message.content)
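If you would rather not depend on the SDK, the same chat request can be sent with the Python standard library alone. This is a minimal sketch, assuming the server is reachable at the base URL you pass in and serves the model under the name `nemotron`. The helper names are ours, not part of vLLM or the OpenAI SDK.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       enable_thinking: bool = False) -> urllib.request.Request:
    """Build a POST request for the OpenAI-compatible chat completions route."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        # Passed through to the chat template, as in the curl example.
        "chat_template_kwargs": {"enable_thinking": enable_thinking},
    }
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_content(response_body: dict) -> str:
    """Pull the assistant text out of a chat completions response."""
    return response_body["choices"][0]["message"]["content"]


def chat(base_url: str, model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send one prompt and return the assistant's reply."""
    request = build_chat_request(base_url, model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return extract_content(json.load(resp))
```

For example, `chat("http://VM-IP:8000", "nemotron", "5.9 - 5.11")` mirrors the SDK call above.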

How fast is Nemotron Super?

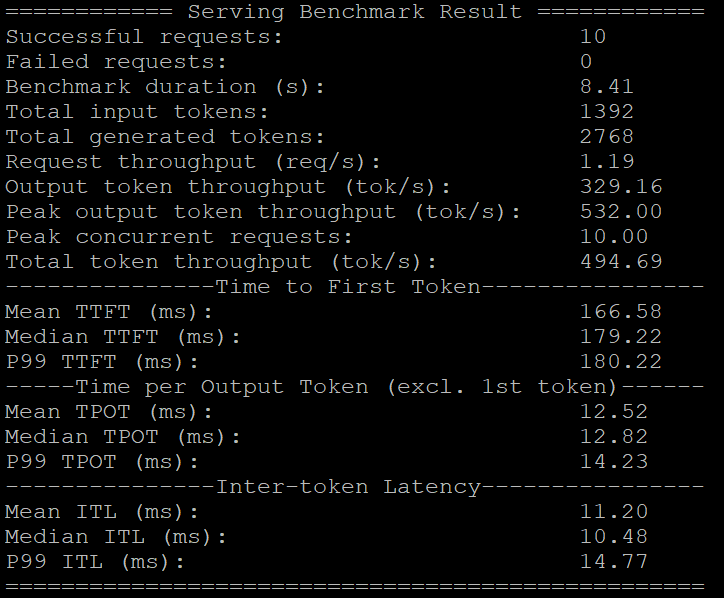

We benchmarked Nemotron 3 Super using the vllm bench serve command. The mean output token throughput was 329 tokens per second, demonstrating excellent inference performance, especially when compared to the 158 tokens/s we observed with gpt-oss-120b and the 223 tokens/s with Nemotron 3 Nano in our previous tests.
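The relative speedups implied by these figures can be computed directly. The snippet below uses only the three throughput numbers quoted above; the dictionary layout and function name are ours.

```python
def speedup(new_tps: float, baseline_tps: float) -> float:
    """Ratio of two mean output-token throughputs (tokens per second)."""
    return new_tps / baseline_tps


# Mean output token throughput (tokens/s) reported in this article.
throughput = {
    "Nemotron 3 Super": 329.0,
    "gpt-oss-120b": 158.0,
    "Nemotron 3 Nano": 223.0,
}

if __name__ == "__main__":
    reference = throughput["Nemotron 3 Super"]
    for name in ("gpt-oss-120b", "Nemotron 3 Nano"):
        print(f"vs {name}: {speedup(reference, throughput[name]):.2f}x")
```

On our setup, that works out to roughly 2.08x the throughput of gpt-oss-120b and 1.48x that of Nemotron 3 Nano.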

Initial Impressions

We ran a few standard tests to see how Nemotron 3 Super handles common logic and coding prompts, including math reasoning tasks.

Prompt: How many 'r's in “strawberry”?

Nemotron Super:

Prompt: How many 'l's in “strawberry”?

Nemotron Super: The model got this right, unlike our previous experience with Nemotron Nano.

Mathematical Reasoning:

Prompt: Find all saddle points of the function $f(x, y) = x^3 + y^3 - 3x - 12y + 20$.

Nemotron Super: The model correctly applied the second derivative test and identified the saddle points without errors in the calculation steps.
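For reference, the expected result can be checked by hand. The derivation below is our own working of the second derivative test, not the model's output.

```latex
\begin{align*}
f_x &= 3x^2 - 3 = 0 \;\Rightarrow\; x = \pm 1, &
f_y &= 3y^2 - 12 = 0 \;\Rightarrow\; y = \pm 2, \\
f_{xx} &= 6x, \quad f_{yy} = 6y, \quad f_{xy} = 0, &
D &= f_{xx} f_{yy} - f_{xy}^2 = 36xy.
\end{align*}
% D < 0 exactly when x and y have opposite signs:
\begin{align*}
D(1, -2) &= -72 < 0, & D(-1, 2) &= -72 < 0, \\
D(1, 2) &= 72 > 0 \ (\text{local minimum, } f_{xx} > 0), &
D(-1, -2) &= 72 > 0 \ (\text{local maximum, } f_{xx} < 0).
\end{align*}
```

The saddle points are therefore $(1, -2)$ and $(-1, 2)$, with $f(1, -2) = 34$ and $f(-1, 2) = 6$.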

Prompt: Compute the area of the region enclosed by the graphs of the given equations “y=x, y=2x, and y=6-x”. Use vertical cross-sections

Nemotron Super: Again, Nemotron Super beat the Nano version with the correct answer, 3.
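The answer can be verified by hand. This is our own working, not the model's output. The three lines intersect at $(0, 0)$, $(2, 4)$, and $(3, 3)$, so with vertical cross-sections the upper boundary changes from $y = 2x$ to $y = 6 - x$ at $x = 2$:

```latex
\begin{align*}
A &= \int_0^2 (2x - x)\,dx + \int_2^3 \bigl((6 - x) - x\bigr)\,dx \\
  &= \left[\tfrac{x^2}{2}\right]_0^2 + \left[6x - x^2\right]_2^3 \\
  &= 2 + (9 - 8) = 3.
\end{align*}
```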

Code Generation:

Prompt: We asked for Python code for the Snake game. Nemotron Super got it right on the first try with a perfect simulation of the game. Here’s a snapshot of the game.

Prompt: Create an SVG of a smiling dog

Nemotron 3 Super was able to create the SVG correctly on the first pass.

Altogether, Nemotron 3 Super is a high-performance open source model. NVIDIA has demonstrated that smarter routing through larger, sparse hybrid architectures can deliver frontier performance with the nimbleness of smaller models.

Build Big on The AI Cloud Designed for Abundance

Deploying advanced MoE models like Nemotron 3 Super requires infrastructure engineered for speed, control, and scale. Radiant’s comprehensive AI Cloud allows you to train faster, fine-tune easily, deploy anywhere, and operate with total control.

- GPU Instances: Launch top-tier, pre-configured NVIDIA GPU instances in seconds. With suspend-and-resume capabilities for idle workloads, you can reduce costs by up to 80% compared to traditional hyperscalers.

- Supercomputers: Provision multi-node clusters of thousands of best-in-class NVIDIA GPUs connected by high-speed InfiniBand or RoCE networking for massive distributed training.

- Inference Delivery Network (IDN): Deploy models globally with our model-routing layer that minimizes latency and enforces data-sovereignty. Choose between Serverless Endpoints for scale-to-zero flexibility or Dedicated Endpoints for strict isolation and predictable performance.

- Serverless Kubernetes: Free your ML teams from infrastructure management. Run existing container workflows natively while the platform handles sub-second cold starts and auto-scaling from zero to thousands of GPUs in real time.