Inference is where AI systems meet reality. Latency, throughput, and stability under load determine whether models feel responsive to users or collapse under demand. As AI workloads scale, it is no longer enough to benchmark models in isolation or at low concurrency. What matters is how inference behaves under real production pressure.

This analysis evaluates Radiant’s inference endpoints across three modern large language models, GPT‑OSS‑20B, Gemma 3 27B‑it, and Llama 4 Scout 17B‑16E Instruct, using identical parameters and a consistent workload. The goal is not to crown a theoretical winner, but to understand practical performance, scalability, and failure modes when these models are deployed in production environments.

Evaluation Methodology

All models were tested using CentML’s Flexible Inference Benchmark (FIB) with prompts drawn from a filtered ShareGPT dataset. Inputs were limited to short prompts (1–63 tokens) with completions capped at 100 tokens, creating a decoder‑bound workload that reflects many real‑world chat and assistant use cases.

To ensure fairness and repeatability:

- All endpoints were reset between runs to eliminate caching effects

- Concurrency was increased incrementally to observe saturation points

- Metrics captured included token throughput, request throughput, latency, and request success rates

This approach moves beyond synthetic benchmarks and surfaces how inference endpoints behave as real users pile on.

Baseline Performance: Throughput and Latency Characteristics

At a fixed maximum concurrency of 32, all three models achieved a 100% request success rate, demonstrating stable endpoint availability with no throttling or dropped requests. Performance, however, diverged quickly

Throughput metrics for models.

Latency metrics for different models.

GPT‑OSS‑20B delivered substantially higher throughput, achieving roughly 2.5× the request and token throughput of both Gemma 3 and Llama 4. It also streamed responses faster, sustaining approximately 121 tokens per second per user—more than double the other models.

Gemma 3 27B‑it and Llama 4 Scout 17B‑16E showed similar streaming characteristics, but with significantly longer time‑to‑first‑token (TTFT). Gemma 3, in particular, exhibited the highest TTFT, indicating that prefill computation was a primary bottleneck under these conditions.

Short prompt workloads emphasize token generation efficiency over prefill latency. Under this regime, GPT‑OSS‑20B’s kernel and attention optimizations translated directly into a more interactive, responsive experience.

Concurrency Ramping and Saturation Behavior

Baseline performance captures only part of the picture. In production, systems must absorb traffic bursts and sustain concurrency without degrading user experience. To evaluate this, prompts filtered from the ShareGPT dataset were sent to single-replica endpoints with progressively increasing maximum concurrency. The same prompt set was used for consistency, and endpoints were reset between runs to eliminate caching effects.

As concurrency increases, the serving stack must handle overlapping workloads, larger batches, and growing contention for GPU memory and compute. The point at which requests begin to fail, latency rises, and throughput plateaus or declines marks the threshold where user experience degrades and additional scaling is required.

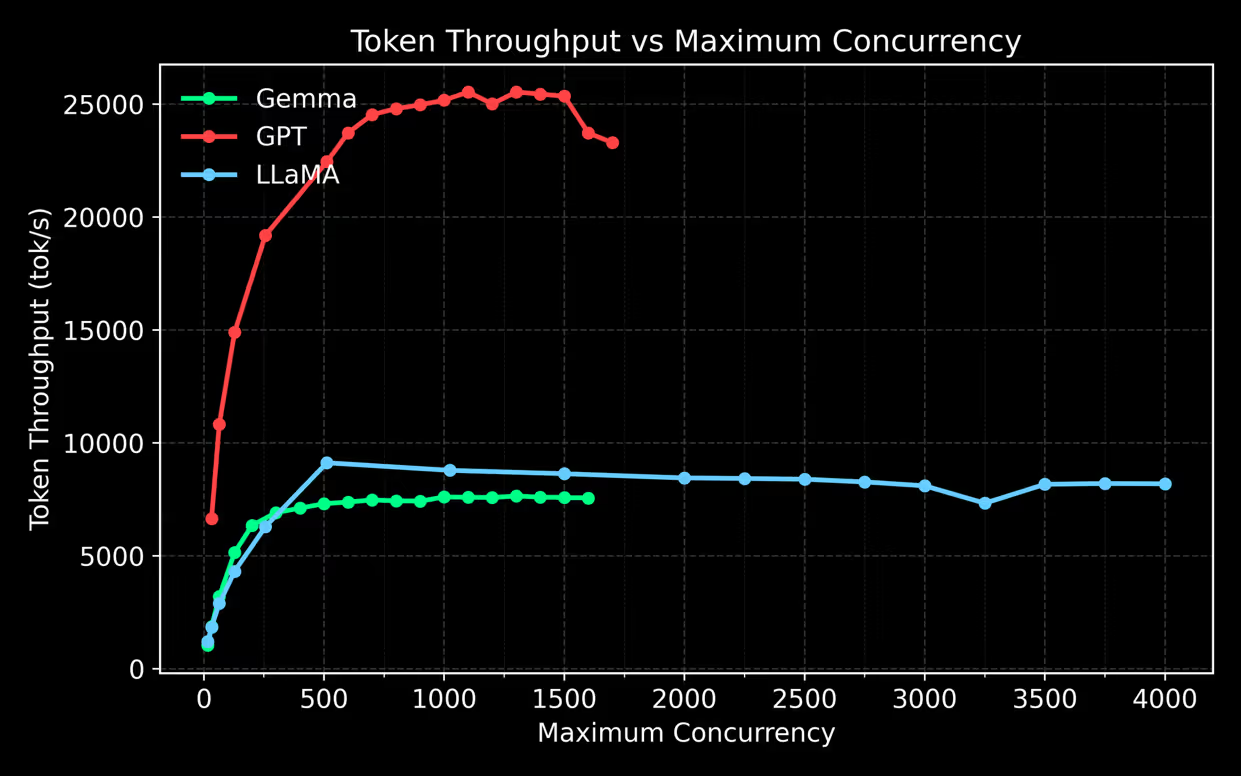

Concurrency ramping used identical inputs (1–63 tokens) and a maximum output length of 100 tokens across GPT-OSS-20B, Gemma 3 27B, and LLaMa 4 Scout 17B-16E Instruct. The results are shown in the graphs below.

Figure 1 shows GPT-OSS outperforming Gemma and LLaMa at concurrencies below 1600. Beyond this point, both Gemma and GPT exhibit degraded response times, with rising instability and batch loss. Although Gemma 27B and GPT-OSS-20B scale sharply at low concurrency, both are single-GPU models, which likely limits their ability to sustain higher concurrent loads relative to LLaMa.

LLaMa, by contrast, is a larger Mixture-of-Experts model spanning four GPUs. This leads to slower initial scaling due to routing and synchronization overhead, but supports acceptable performance and request success rates up to roughly 4000 concurrent in-flight requests. LLaMa peaks early (around 500 concurrent requests) before gradually declining in token throughput, unlike Gemma’s flatter plateau or GPT’s later peak before instability.

From a production perspective, GPT-OSS offers the best balance of throughput, scalability, and stability. Gemma saturates early with the lowest token throughput, while LLaMa trades efficiency for greater concurrency tolerance.

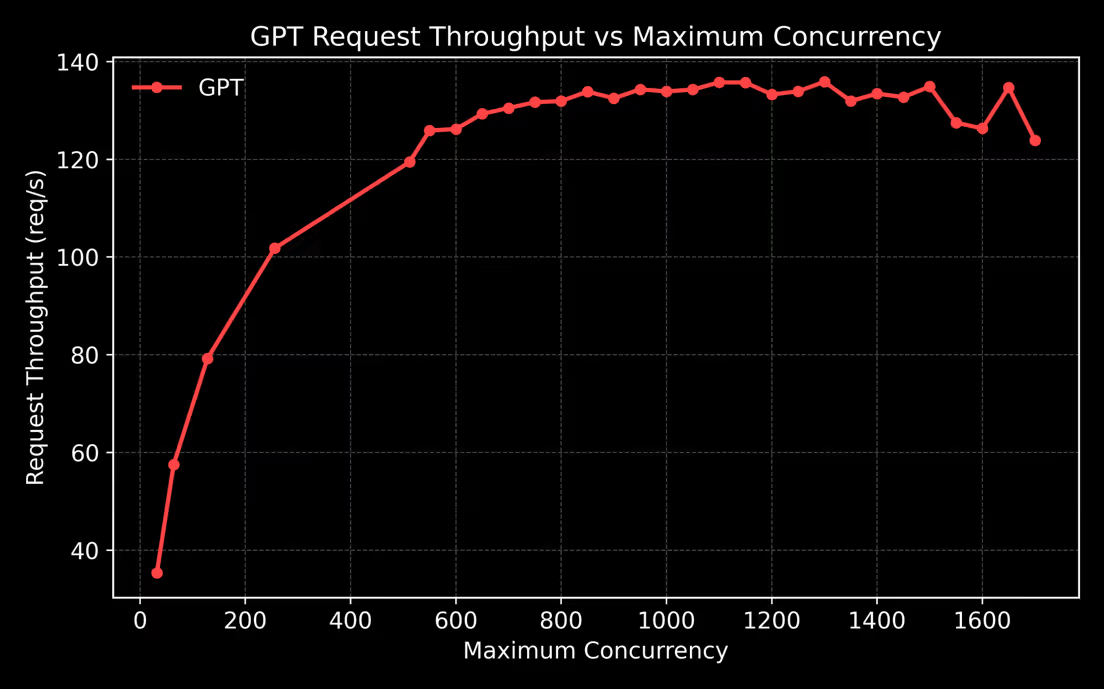

GPT shows the steepest initial scaling, increasing from ~35 req/s at concurrency 32 to over 100 req/s at a maximum concurrency of 256. Throughput then continues to climb, reaching a stable plateau of ~130–136 req/s between ~800 and ~1400 concurrency. The plateau is roughly twice as fast as Gemma’s, indicating superior utilisation of GPU resources and more efficient scheduling. The fluctuations present at high concurrency are due to batches and requests being lost as the service loses stability.

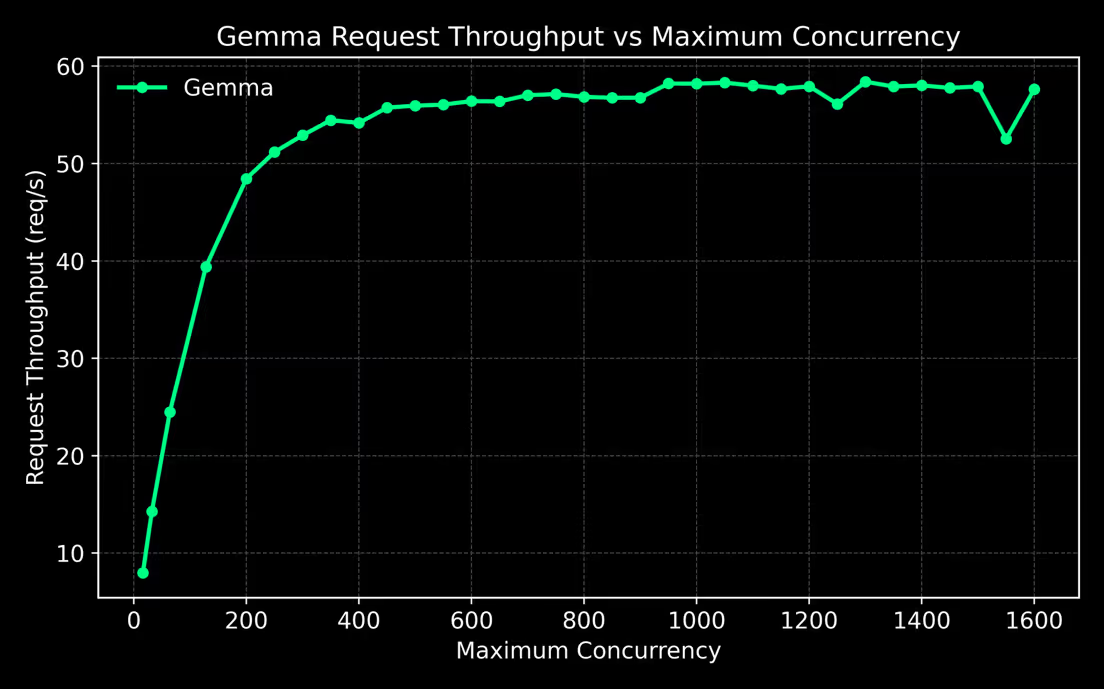

For the Gemma model, request throughput increases from ~8 req/s at concurrency 16 to ~49 req/s at 200, driven by improved batching efficiency. Throughput then rises gradually, reaching a flat plateau of ~56–59 req/s between 400 and ~1400 concurrency, indicating early saturation and limited benefit from additional inflight requests.

Minor fluctuations appear at higher concurrency, including a dip around 1500 concurrency (~53 req/s) caused by request loss. Beyond ~1600 concurrency, throughput becomes unstable and request success rates drop sharply, signaling unreliable operation.

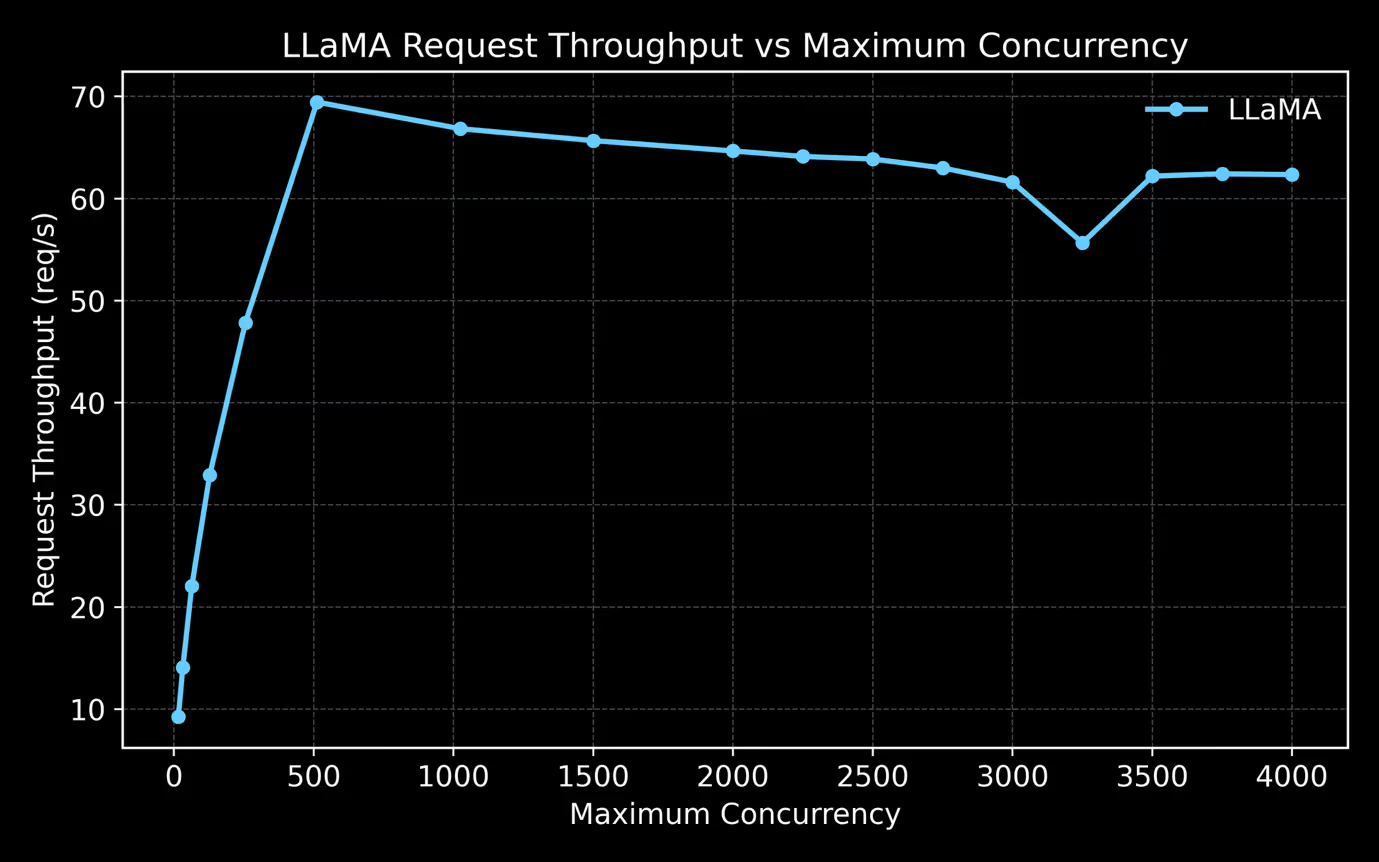

The LLaMA model shows slower scaling at low concurrency, increasing from ~9 req/s at concurrency 16 to a peak of ~70 req/s at ~512 concurrency. Unlike GPT and Gemma, LLaMA reaches its maximum throughput earlier, after which throughput gradually declines. Throughput decreases slowly from ~70 req/s at 512 to ~62–64 req/s in the 1000–2500 concurrency range. A significant drop occurs at 3250 concurrent requests corresponding to one of the runs at that concurrency losing a large batch of requests.

What These Results Mean in Practice

From a production perspective, three insights stand out:

- Throughput alone is not enough. Models can appear performant at low concurrency yet collapse once saturation is reached.

- Concurrency has a breakpoint. Beyond this point, adding users leads to request loss rather than higher throughput.

- Architecture matters. Single‑GPU models deliver excellent efficiency until they don’t. Multi‑GPU MoE models trade peak efficiency for higher concurrency tolerance.

For most production inference deployments, GPT‑OSS‑20B provided the best balance of throughput, scalability, and stability within a single‑replica setup. Gemma 3 saturated too early for high‑load scenarios, while Llama 4 favored concurrency tolerance over raw efficiency.

Deliver Real-time Inference at Scale with Radiant’s Inference Delivery Network

These results underscore a broader truth: model choice is only half the equation. The model serving stack which includes scheduling, batching, isolation, and orchestration, ultimately determines how much usable performance and economic value can be extracted from GPU infrastructure under real load. In production environments, inefficiencies at this layer compound quickly, translating into unstable latency, wasted capacity, or premature scaling.

Radiant’s inference platform is designed with infrastructure-grade discipline to address these realities. It delivers predictable performance under sustained concurrency, exposes clear saturation points to inform replica and capacity planning, and maintains high GPU utilization without compromising stability or user experience. This combination enables sovereign entities, enterprises, telcos and AI builders to move beyond best-case benchmarks and deploy inference systems that remain reliable, efficient, and economically defensible as demand scale. Talk to an Inference Infrastructure Expert

%202.avif)

.png)